Redesigning Florida's School Report Cards

The Foundation for Excellence in Education, an organization that advocates for education reform in Florida, in particular the set of policies sometimes called the "Florida Formula," recently announced a competition to redesign the “appearance, presentation and usability” of the state’s school report cards. Winners of the competition will share prize money totaling $35,000.

The contest seems like a great idea. Improving the manner in which education data are presented is, of course, a laudable goal, and an open competition could potentially attract a diverse group of talented people. However, as regular readers of this blog know, although I am not opposed to sensibly designed test-based accountability policies, my primary concern about school rating systems is the quality and interpretation of the measures used therein. So, while I support the idea of a competition for improving the design of the report cards, I am hoping that the end result won't just be a very attractive, clever instrument devoted to the misinterpretation of testing data.

In this spirit, I would like to submit four simple graphs that illustrate, as clearly as possible and using the latest data from 2014, what Florida’s school grades are actually telling us. Since the scoring and measures vary a bit between different types of schools, let’s focus on elementary schools.

Half of the points a school can earn are based on measures that tell us virtually nothing about the actual test-based effectiveness of a school.

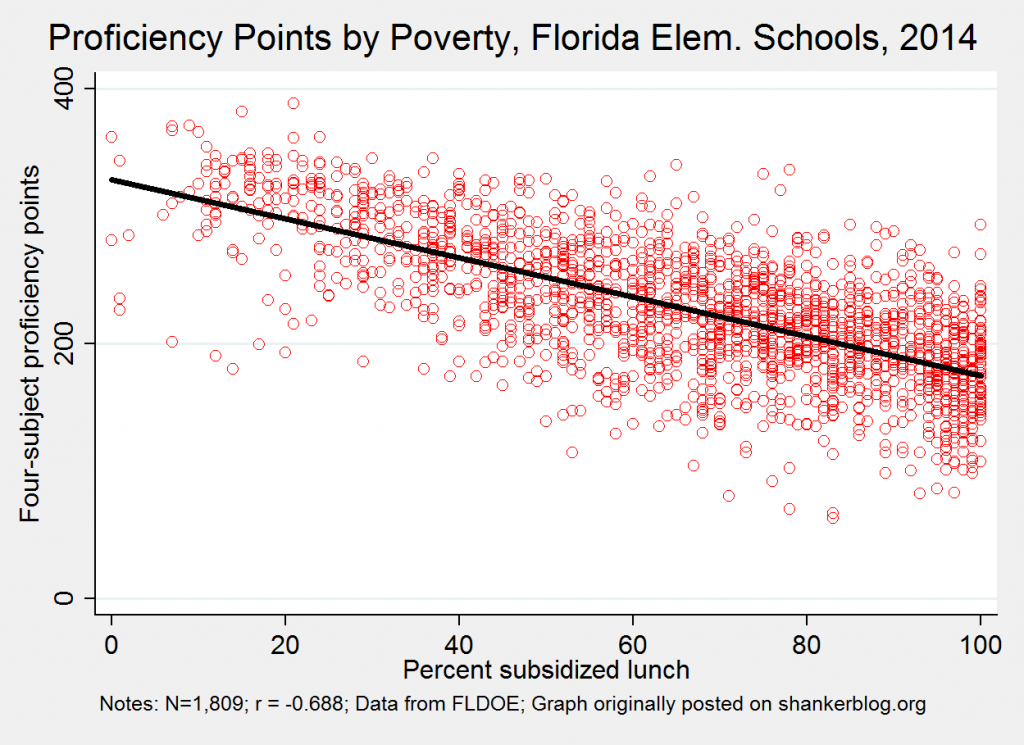

Elementary schools can earn up to 400 points (out of 800 total) based on the (adjusted) proportion of their students who score proficient or higher in four subjects: reading, math, writing and science.

There can be no dispute that how highly students score on tests is mostly a function of measurable and unmeasurable non-school factors (e.g., family background, error, etc.), rather than which school they attend. In other words, simple proficiency rates describe student performance far more than they provide any indication of schools’ contributions to it. One useful, albeit incomplete, way to illustrate this is to examine the relationship between the rates and key student characteristics, such as poverty. In the scatterplot below, each red dot is an elementary school. The horizontal axis is the percent of students in each school eligible for subsidized lunch, a rough proxy for income/poverty. The vertical axis is the total number of points schools earn from proficiency in these four subjects. The black line is the "average relationship" between these two variables.

There is a rather strong bivariate association between poverty and proficiency points (and it would be even stronger but for the relatively weak correlation between poverty and writing rates). With relatively few exceptions, schools with low poverty rates earn between 300 and 400 points, whereas virtually all of the poorest schools receive between 100 and 300 points.
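For readers who want to reproduce this kind of check, here is a minimal sketch of how the correlation and the "average relationship" line could be computed. The school values are made up for illustration and are not Florida's data, and I am assuming the black line is an ordinary least-squares fit, which the post does not confirm.

```python
from statistics import mean


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


def fit_line(xs, ys):
    """Least-squares slope and intercept (assumed form of the 'average relationship')."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, my - slope * mx


# Hypothetical schools: percent eligible for subsidized lunch, proficiency points (0-400).
poverty_pct = [10, 25, 40, 55, 70, 85, 95]
proficiency_points = [360, 340, 300, 270, 240, 200, 180]

r = pearson_r(poverty_pct, proficiency_points)
slope, intercept = fit_line(poverty_pct, proficiency_points)
print(f"correlation: {r:.2f}; points lost per poverty point: {-slope:.2f}")
```

A strongly negative correlation and a downward-sloping line are what the scatterplot shows visually; the sketch only makes the two summary numbers explicit.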

If the newly designed reports are to improve the interpretation of these measures, they should be presented and/or visualized as student rather than school performance indicators, which can be useful in identifying schools serving large proportions of students who need extra assistance (or, for parents, schools in which their children would be surrounded by higher-performing peers).

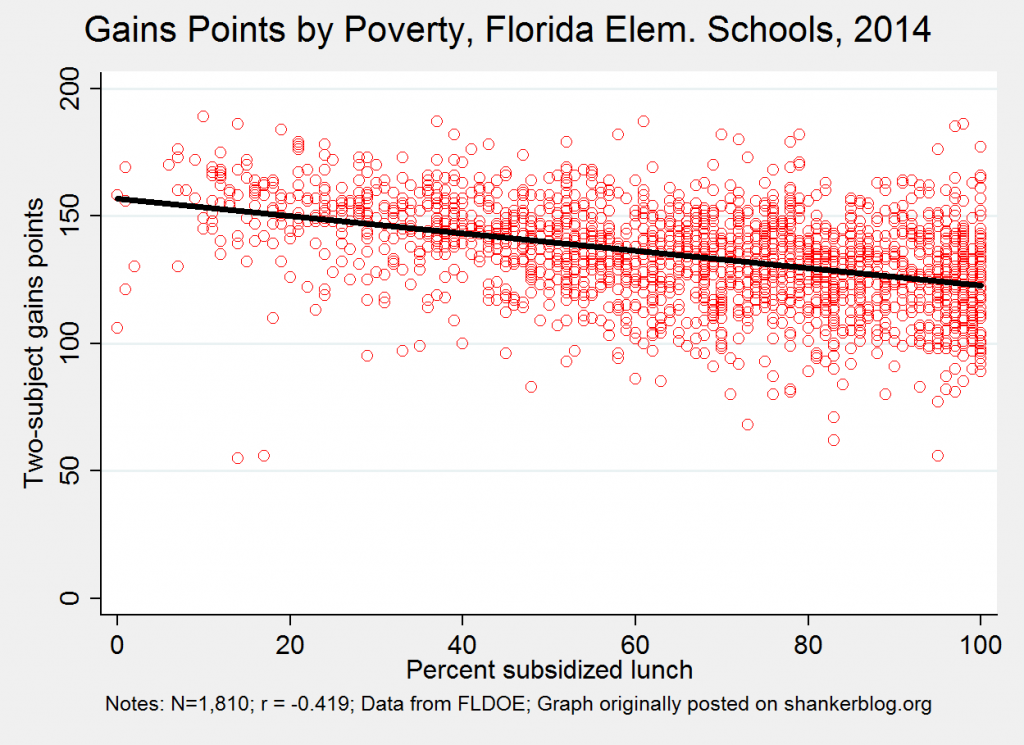

Another 25 percent of the grades are determined by two "gains" measures that, by their design, are quite redundant with proficiency rates, and thus provide only vague information about schools' actual (test-based) effectiveness.

Implemented and interpreted properly, growth models are a viable, though inevitably imperfect, option for gauging actual school effectiveness (in raising test scores). In Florida, elementary schools can earn a total of 200 possible points for the percent of all students “making gains” in math and reading.

The problem with these measures is that the state counts students as “making gains” if they make progress OR if they were proficient or advanced last year (in this case, 2013), and remain proficient or advanced the next year (in this case, 2014), without moving down a category. This means that schools with high proficiency rates have a huge advantage (the correlation between "gains" points and proficiency points is about 0.67), and these two “gains” measures are themselves associated with student characteristics such as poverty. You can see that in the second scatterplot, below.

The correlation, of course, is not as strong as that between proficiency and poverty, but there is a significant relationship between school poverty and “gains” points. With relatively few exceptions, the schools with the lowest poverty rates score highly, whereas poorer schools tend to receive lower point totals.

In other words, these "gains" measures are basically indicating (crudely, by the way) the proportion of students who are making progress PLUS the proportion who already meet proficiency standards. I suppose the new report cards might characterize this as a hybrid of school and student performance, but it's a little tough to separate one from the other. In my personal opinion, this measure is not particularly useful as currently calculated.*
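The counting rule described above can be sketched in a few lines. This is a simplified illustration, not the state's actual calculation: I am assuming achievement levels numbered 1 to 5 with level 3 as the proficiency cutoff, and I treat "making progress" as simply moving up a level. The function names are hypothetical.

```python
PROFICIENT_LEVEL = 3  # assumption: levels run 1-5 and level 3 or above counts as proficient


def counts_as_gain(prev_level, curr_level):
    """Apply the rule as described in the post (simplified).

    A student counts if they made progress (here: moved up a level), OR if
    they were proficient last year and remain proficient this year without
    moving down a category.
    """
    made_progress = curr_level > prev_level
    stayed_proficient = (
        prev_level >= PROFICIENT_LEVEL
        and curr_level >= PROFICIENT_LEVEL
        and curr_level >= prev_level
    )
    return made_progress or stayed_proficient


def share_making_gains(students):
    """students: list of (last_year_level, this_year_level) pairs."""
    return sum(counts_as_gain(p, c) for p, c in students) / len(students)


# Two hypothetical schools in which no student's level changes at all.
high_scoring_school = [(4, 4), (3, 3), (5, 5), (4, 4)]
low_scoring_school = [(2, 2), (1, 1), (2, 2), (1, 1)]

print(share_making_gains(high_scoring_school))  # 1.0
print(share_making_gains(low_scoring_school))   # 0.0
```

In this toy example neither school moves any student up a level, yet one receives full credit and the other none, purely because of where its students started.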

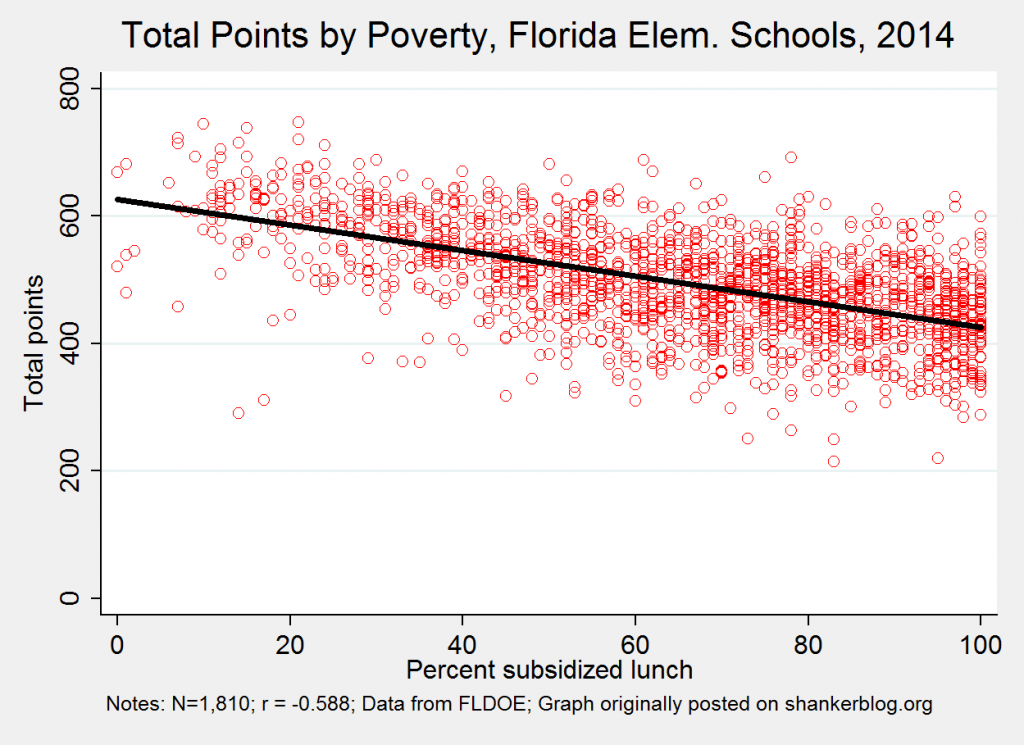

The total number of points schools earn is thus rather strongly correlated with student characteristics.

You can see this in the third scatterplot, below, which presents the relationship between total points and school poverty.

Once again, schools’ point totals (this time, out of 800) decline pretty sharply, on average, as poverty rates go up. All but a handful of the schools with the lowest poverty rates receive between 550 and 750 points, while the vast majority of the poorest schools score between 250 and 450. Again, it's very difficult to tell how much of each school's point total is due to its effectiveness (in raising scores), rather than the students it serves. One could conceivably present these data (in a report card) in a manner emphasizing whether the school scores higher or lower than schools serving similar populations (e.g., in the graph above, whether they're above or below the black line).
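One rough way to express "above or below the black line" is the gap between a school's actual point total and the total predicted from its poverty rate. The sketch below assumes the line is a simple least-squares fit and uses invented numbers; the function name is hypothetical.

```python
from statistics import mean


def points_relative_to_line(poverty, points):
    """Return each school's actual points minus the points predicted
    by a least-squares line fit on poverty (positive = above the line)."""
    mx, my = mean(poverty), mean(points)
    sxy = sum((x - mx) * (y - my) for x, y in zip(poverty, points))
    sxx = sum((x - mx) ** 2 for x in poverty)
    slope = sxy / sxx
    intercept = my - slope * mx
    return [y - (intercept + slope * x) for x, y in zip(poverty, points)]


# Hypothetical schools: poverty rate (percent) and total points (out of 800).
poverty = [10, 30, 50, 70, 90]
total_points = [700, 600, 560, 400, 340]

for pov, pts, gap in zip(poverty, total_points,
                         points_relative_to_line(poverty, total_points)):
    position = "above" if gap > 0 else "below"
    print(f"poverty {pov}%: {pts} points, {abs(gap):.0f} {position} the line")
```

In this made-up data, the school with the most points sits slightly below the line, while a mid-poverty school with fewer points sits well above it. That is the kind of comparison a raw point total hides. It remains only a crude adjustment, since poverty is one characteristic among many.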

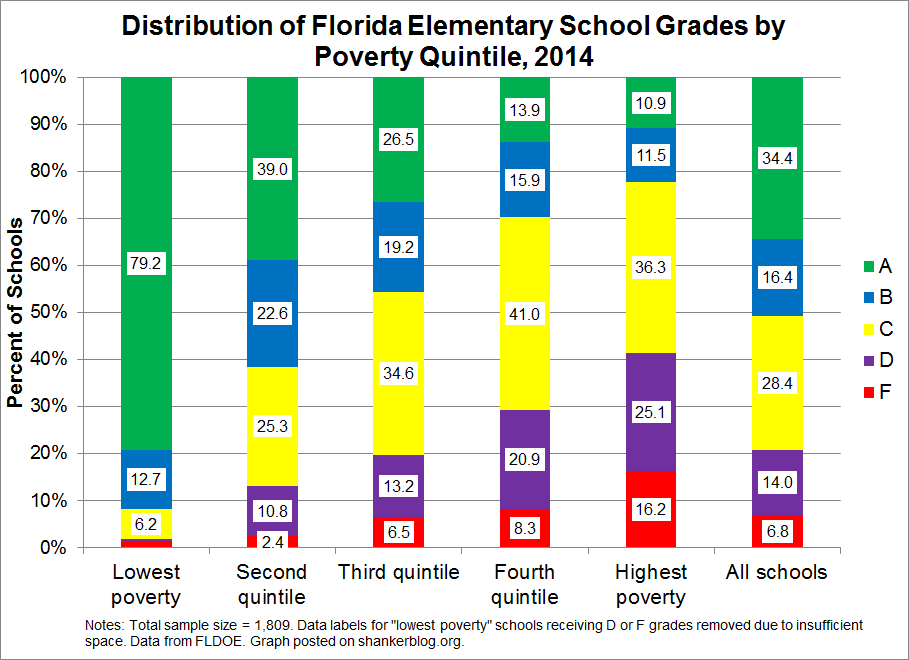

The end result of the Florida grading system is that the schools with the lowest poverty rates are almost guaranteed a good grade, and most of the poorest schools receive a grade of C or lower.

When final point totals are sorted into A-F letter grades, the results broken down by school poverty are rather dramatic. The final graph, below, presents the distribution of grades for schools in five poverty quintiles – the 20 percent of schools with the lowest poverty rates, the next 20 percent, and so on.

Over 90 percent of schools with the lowest poverty rates receive grades of A or B, while over half of the poorest schools are given a C or lower. In other words, according to Florida’s grading system, the overwhelming majority of low-performing schools in the state have poverty rates above the state median, and virtually every school with a low poverty rate is a high performer.
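The quintile breakdown itself is simple to compute. Here is a minimal sketch, assuming each school is represented as a (poverty rate, letter grade) pair and that there are at least five schools; the data and the function name are invented for illustration.

```python
from collections import Counter


def grade_shares_by_quintile(schools):
    """schools: list of (poverty_pct, grade) pairs, at least five of them.

    Returns five dicts mapping grade -> share of schools, ordered from the
    lowest-poverty fifth of schools to the highest-poverty fifth.
    """
    ranked = sorted(schools, key=lambda s: s[0])
    n = len(ranked)
    shares = []
    for q in range(5):
        group = ranked[q * n // 5:(q + 1) * n // 5]
        counts = Counter(grade for _, grade in group)
        shares.append({g: c / len(group) for g, c in counts.items()})
    return shares


# Hypothetical schools, not Florida's actual grades.
schools = [(5, "A"), (12, "A"), (28, "A"), (35, "B"), (48, "B"),
           (55, "C"), (67, "B"), (74, "C"), (88, "C"), (96, "D")]

for i, dist in enumerate(grade_shares_by_quintile(schools), start=1):
    a_or_b = dist.get("A", 0) + dist.get("B", 0)
    print(f"quintile {i}: share graded A or B = {a_or_b:.0%}")
```

Grouping by rank rather than by fixed poverty cutoffs keeps the five groups the same size, which matches the "20 percent of schools" framing used in the graph.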

It is perfectly reasonable to expect to find disproportionate numbers of high performing schools in wealthier neighborhoods, as these schools tend to have lower teacher turnover, better funding, etc. But these Florida results are implausible, and, importantly, it’s very easy to identify the reason why – it's due to the measures chosen. This is baked into the system (and note that this issue is not at all exclusive to Florida's system).

Does this mean the grades as currently calculated are useless? No, I don’t think so. It all depends on how the information is interpreted and used (see this post for more discussion). The problem is that the information is, more often than not, misinterpreted and misused.

Accordingly, when it comes to the aforementioned competition to redesign the state’s school report cards, it's not exactly easy to believe that the winning designs will substantially improve the situation. I say this not because improvement is impossible, or because I doubt the talents of the entrants, or because I wouldn't be thrilled to be proven incorrect. Rather, it's because improvement would require the selection of designs embodying interpretations of the data that are fundamentally at odds with those regularly put forth by the foundation running the contest, not to mention the Florida DOE.

Winners that generate true substantive improvement might, for example, include a clear explanation of the fact that the grades schools earn are based on measures that are largely out of their control - i.e., they are more student than school report cards. Instead of fetishizing the single A-F grade as some kind of comprehensive measure, there should be heavy emphasis on providing different interpretations of each measure, as well as the overall grades, for different groups of consumers (e.g., parents, administrators, policymakers, etc.). And there might be some effort to flag the many dozens of schools tagged with D and F grades that are actually extremely effective in improving the performance of their students (who are, by the way, the students most in need of that improvement).

Overall, though, my opinion (and that's all it is) is that Florida would be well-advised to consider overhauling how its grades are calculated before redesigning how they are presented to the public.

- Matt Di Carlo

*****

* The final 25 percent of Florida's elementary school grades, and the only component that I would regard as a useful measure of actual school effectiveness, is the proportion of low-scoring students "making gains." The "proficiency exemption" has little effect here, since very few, if any, of these low-scoring students come in above the proficiency threshold.

Excellent points on how even growth and poverty can be correlated. As I wrote earlier this week, I doubt that even the most talented graphics designer can put student test scores in the context of a school's demographics.

http://educationbythenumbers.org/content/even-education-data-geeks-agre…

The devil is always in the statistical details and I like the way you broke down the schools by quintiles. But I'm not clear why it's "implausible" that Florida's poorest schools might have the worst teachers and administrators? That's exactly the point that the plaintiffs in the California Vergara case made.

Also, I believe the design competition is to redesign any generic school report card, not a Florida one specifically. Here are the competition details...

http://myschoolinfochallenge.com/wp-content/uploads/Designer-Info-Packe…