Dispatches From The Nexus Of Bad Research And Bad Journalism

In a recent story, the New York Daily News uses the newly released teacher data reports (TDRs) to “prove” that the city’s charter school teachers are better than their counterparts in regular public schools. The headline announces boldly: "New York City charter schools have a higher percentage of better teachers than public schools" (it has since been changed to: "Charters outshine public schools").

Taking things even further, within the article itself, the reporters note, “The newly released records indicate charters have higher performing teachers than regular public schools."

So, not only are they equating words like “better” with value-added scores, but they’re obviously comfortable drawing conclusions about these traits based on the TDR data.

The article is a pretty remarkable display of both poor journalism and poor research. The reporters not only attempted to do something they couldn’t do, but they did it badly to boot. It’s unfortunate to have to waste one’s time addressing this kind of thing, but, no matter your opinion on charter schools, it's a good example of how not to use the data that the Daily News and other newspapers released to the public.

Beyond the two statements mentioned above – the headline(s) and the quoted sentence – the article includes no other discussion of the data on which the reporters are basing their claims. Those claims seem to be supported by statistics presented in a graphic. As of my writing this, however, the graphic doesn’t load on the newspaper’s webpage, so I’m not positive what it includes. It’s a decent bet that the reporters present a graph or simple table showing the percentage of teachers rated “high” or “above average” by the city’s performance categories, for both charters and regular public schools (or, perhaps, the number of schools with a certain proportion of these teachers).

Even if we take the city’s (flawed) performance categories at face value, the original headline is only half true. In 2010, a larger proportion of charter teachers, compared with their regular public school counterparts, are rated, by the city’s scheme, “high” or “above average” in math, while a lower proportion receive those ratings in ELA.

However, we can’t take the performance categories – or the Daily News' “analysis” of them – at face value. Their approach has one virtue – it’s easy to understand, and easy to do. But it has countless downsides, one of them being that it absolutely cannot prove – or even really suggest – what they’re saying it proves. I don't know if the city's charter teachers have higher value-added scores. It's an interesting question (by my standards, at least), but the Daily News doesn't address it meaningfully.

Though far from the only one, the reporters' biggest problem was right in front of them. The article itself notes that only about half (32) of the city’s charter schools chose to participate in the rating program (it was voluntary for charters). This is actually the total number of participating schools in 2008, 2009 and 2010, most of which rotated in and out of the program each year. It’s apparently lost on these reporters that only a minority of charters participating means that the charter teachers in the TDR data do not necessarily reflect the population overall. This issue by itself renders their assertions invalid and irresponsible.

There are only about 50-60 charter school teachers in the 2010 sample, spread over 18 schools, a small fraction of the totals throughout the city. There are even fewer in 2009 and only a tiny handful in 2008. We cannot draw any conclusions about charter school teachers in the city based on this simple analysis, to say nothing of the bold statements in the article (even a more thorough, sophisticated analysis would be severely limited by the tiny samples).

And that’s not all. Somewhat surprisingly (at least to me), over one-third (37 percent) of charter teachers in the 2010 data are coded as co-teaching, compared with only about 12 percent of regular public school teachers. In fact, in five charter schools, every single teacher in the dataset is a co-teacher (though, again, there are only a few teachers in most of these schools). It's unclear whether these estimates are comparable to those for single-teacher classrooms, no matter how you compare them (especially since the data don’t tell us experience for co-teachers – they’re simply coded as co-teachers). This is another potentially serious complication.

So too is the fact that the value-added scores for the city’s charter school teachers are based on smaller samples (i.e., number of students), and are thus less precisely estimated. For example, the average 2010 sample for a regular public school teacher is about 30 percent larger in math, and almost 50 percent larger in ELA. The city’s “performance” categories, upon which the Daily News' "analysis" (or at least what I imagine it to be) is based, ignore this (they also use percentile ranks that are calculated within experience groups, and the experience distribution varies drastically between the two types of schools).
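To put those sample-size gaps in rough perspective, here is a minimal sketch. It rests on a simplifying assumption that is not part of the city's model: that the standard error of a teacher's estimate is proportional to one over the square root of the number of students. The function name is hypothetical.

```python
import math

def relative_standard_error(size_ratio: float) -> float:
    """Standard error of the smaller-sample estimate relative to the
    larger-sample one, ASSUMING SE is proportional to 1 / sqrt(n students).
    size_ratio = larger sample size / smaller sample size."""
    return math.sqrt(size_ratio)

# From the post: regular public school teachers' samples are about
# 30 percent larger in math and almost 50 percent larger in ELA.
for subject, ratio in {"math": 1.30, "ELA": 1.50}.items():
    factor = relative_standard_error(ratio)
    print(f"{subject}: charter standard errors roughly {factor:.2f}x as large")
```

Under that assumption the typical charter estimate is noticeably noisier, which matters most for category cutoffs that ignore precision altogether.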

Frankly, given this imprecision, the variable issues and the tiny sample of charter teachers, I'm not even very comfortable saying these data reflect the value-added scores of tested charter teachers in these few schools.

Nevertheless, I would like to quickly make the comparison using more appropriate (but still very simple and limited) models, but only to highlight how little it tells us. I’ll also replicate them with and without co-teachers, so we can take a look at that too.*

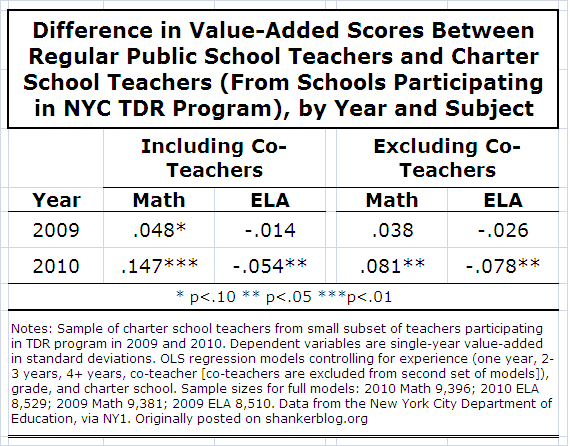

The numbers in the table below are for illustrative purposes only. They represent the estimated difference in value-added score (in standard deviations) between regular public school teachers and the small group of charter school teachers whose schools participated in the TDR program, controlling for a couple of other variables in the datasets. If the numbers are positive, charter teachers are higher. There are separate comparisons for each year and subject.**

In the models that include co-teachers, in 2010 math, there is a rather large, statistically significant (at any conventional level) difference between charter and regular public school teachers – about 0.15 standard deviations. We find the opposite situation in 2010 ELA. Regular public school teachers’ value-added scores are significantly higher by about 0.05 standard deviations.***

But, for our purposes, the important result here is the comparison between 2009 and 2010 (that between the two numbers in each column of the table). The estimates are very different. Some of this is just noise, but it is also a result of the aforementioned fact that there are different schools – and different teachers – included in the data in each year, so the estimates fluctuate (only a couple of schools are in the data in both years). It’s impossible to say what the results would look like if all charter teachers were included (and that comparison would have to be much more thorough than mine).

The estimates also vary depending on whether one includes co-teachers (in 2010 only as there were relatively few co-teachers in the 2009 data). When they're excluded, charter school teachers’ value-added is higher in math, and lower in ELA, by roughly equal margins in 2010. So, there’s another potential problem in making this comparison using these data (it may also be an issue for the models themselves, but that's a separate discussion).

Overall, then, bearing in mind all the huge caveats above, here’s a fair summary of these results: Value-added scores of the 50 or 60 charter school teachers in the 2010 data seem to be higher in math, and lower in ELA, but, due to the fact that there are so few charter teachers included from so few schools, as well as other issues, such as co-teaching and differences in sample sizes, we can’t really say that with too much confidence. And we certainly can’t reach even tentative general conclusions about the value-added scores of teachers in the two types of NYC schools.

Unless, of course, we’re the New York Daily News.

- Matt Di Carlo

*****

* A few somewhat tedious details about the data: I merged the newly-released charter data in with those for the city’s regular public school teachers, separating teachers in charters from teachers in the city’s “District 75” schools. The dependent variable is the actual value-added score (in standard deviations) of each teacher. There were simply not enough charter teachers to use the multi-year value-added scores, so I limit my analysis to the single-year estimates. The city’s actual value-added models do not control for teacher experience (though the percentile ranks are calculated within experience groups). My OLS regression models control for teacher experience (categories), grade (4-8) and, of course, whether the teacher works at a charter school (teachers with "unknown" experience are excluded). Grade is already in the city's model, and so including it in mine doesn't appreciably affect the results.
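For readers who want to see the shape of such a model, here is a minimal sketch of an OLS regression with a charter indicator plus experience-category and grade dummies. It is not the code used for this post: the function name, the synthetic data, and the simulated gap of 0.15 are all made up for illustration.

```python
import numpy as np

def fit_charter_gap(score, charter, experience, grade):
    """OLS of value-added score on a charter indicator plus dummies for
    experience category and grade; returns the charter coefficient."""
    score = np.asarray(score, dtype=float)
    columns = [np.ones_like(score), np.asarray(charter, dtype=float)]
    for values in (np.asarray(experience), np.asarray(grade)):
        # The first level of each categorical serves as the reference group.
        for level in np.unique(values)[1:]:
            columns.append((values == level).astype(float))
    X = np.column_stack(columns)
    coef, *_ = np.linalg.lstsq(X, score, rcond=None)
    return coef[1]

# Synthetic data only (hypothetical values, not the TDR files).
rng = np.random.default_rng(0)
n = 5000
charter = rng.random(n) < 0.05            # a small share of charter teachers
experience = rng.integers(0, 4, size=n)   # four experience categories
grade = rng.integers(4, 9, size=n)        # grades 4-8
simulated_gap = 0.15                      # arbitrary gap built into the fake data
score = simulated_gap * charter + 0.03 * experience + rng.normal(0, 1, size=n)

print(f"estimated charter gap: {fit_charter_gap(score, charter, experience, grade):.3f}")
```

Rerunning the simulation with a different seed, or with only a few dozen charter teachers, shows how far the estimate can drift from the gap built into the data.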

** One might argue that charters participating in the program tend to be those with less well-developed evaluation systems of their own, and might thus include schools that are less well-established. Eyeballing the list of participating schools in 2010, this does not appear to be the case. Almost half the 2010 charter sample consists of teachers in either the Harlem Children’s Zone or the city’s KIPP schools, which are among the most highly-regarded charters in the city.

*** I present results for these models separately by year and subject because it’s easier to understand and interpret, and to illustrate the variation in the results between years. Also, combining across years means that many regular public school teachers would be counted twice. I did, however, run these models (fitting year dummies), and the conclusions are substantively the same.

My name is Ben Chapman and I wrote the article. Speaking of bad research and bad journalism, don't you think it's a little silly to write this critique of my piece without even seeing the graphic? Or contacting the author? Instead of doing any reporting, you wrote about what you "imagine." Next time you write about one of my pieces drop me a line. I'm easy to find.

Hi Ben,

Thanks for your comment. Fair enough, but the primary point of the post is that you could not have possibly drawn the conclusions you did based on the data released by the city, no matter how you presented it. In addition, the article does state the findings - i.e., "a higher percentage of better teachers than regular public schools" - which is why I felt I could infer what's in the graphic.

That said, I should have asked to see it just for the sake of due diligence, and I apologize for that.

MD

NOTE TO READERS: Ben Chapman has provided a text version of the graphics that were presented in the article but that do not currently show up in the online version.

Sidebar:

PARTICIPATING CHARTERS

- Achievement First-Bushwick Charter School
- Achievement First Crown Heights Charter School
- Achievement First East New York School
- Achievement First Endeavor Charter School
- Bedford-Stuyvesant Collegiate Charter School
- Beginning With Children Charter School
- Bronx Charter School for Arts
- Bronx Prep Charter School
- Brooklyn Prospect Charter School
- Brownsville Collegiate Charter School
- Community Partnership Charter
- Coney Island Preparatory Public Charter School
- Democracy Prep Charter School
- Excellence Charter School of Bedford-Stuyvesant
- Family Life Academy Charter School
- Future Leaders Institute Charter School
- Girls Preparatory Charter School of New York
- HARBOR Sciences and Arts Charter School
- Harlem Children's Zone/Promise Academy Charter School
- Harlem Children's Zone/Promise Academy II
- Hellenic Classical Charter School
- KIPP Academy Charter School
- KIPP AMP Charter School
- KIPP Infinity Charter School
- KIPP S.T.A.R. Charter School
- Leadership Prep Charter School
- The Renaissance Charter School
- Sisulu-Walker Charter School
- The Bronx Lighthouse Charter School
- The Equality Charter School
- The Equity Project Charter School (TEP)
- Williamsburg Collegiate Charter School

Graphic: Inside the numbers: public vs. charter

Take a look at how public school and some charter school teachers were ranked in the 2009-2010 school year:

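Public Schools

| Rating | Teachers | Share |
| --- | --- | --- |
| HIGH | 906 | 5% |
| ABOVE AVERAGE | 3,457 | 19% |
| AVERAGE | 8,981 | 50% |
| BELOW AVERAGE | 3,593 | 20% |
| LOW | 899 | 5% |
| TOTAL | 17,836 | |

Charter Schools

| Rating | Teachers | Share |
| --- | --- | --- |
| HIGH | 14 | 12% |
| ABOVE AVERAGE | 30 | 26% |
| AVERAGE | 39 | 34% |
| BELOW AVERAGE | 26 | 23% |
| LOW | 5 | 4% |
| TOTAL | 114 | |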

Source: Department of Education

*Charter schools are not required to report attendance data, teacher experience level and other data used to determine the rankings.
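As a rough illustration of how much sampling noise alone surrounds the charter figures in the graphic, the sketch below computes the share rated "high" or "above average" in each sector with a 95 percent Wilson score interval. It assumes each counted rating is an independent draw, which may not hold, and the interval says nothing about the larger problem of voluntary, non-random participation.

```python
import math

def wilson_interval(successes: int, n: int, z: float = 1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 for ~95%)."""
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half

# Counts taken from the graphic: "high" + "above average", and the totals.
counts = {
    "public": {"top": 906 + 3457, "total": 17836},
    "charter": {"top": 14 + 30, "total": 114},
}

for sector, c in counts.items():
    low, high = wilson_interval(c["top"], c["total"])
    share = c["top"] / c["total"]
    print(f"{sector}: {share:.1%} rated high or above average "
          f"(95% interval {low:.1%} to {high:.1%})")
```

The charter interval is many times wider than the public school one simply because it rests on 114 ratings rather than 17,836.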

I'm confused -- doesn't that match what you assumed it would contain? There is an acknowledgement of sorts in this 'graphic' that the charter school data do not include all teachers/schools. But if the issue of bias is never addressed, what is Mr. Chapman objecting to?