Michelle Rhee's Testing Legacy: An Open Question

** Also posted here on “Valerie Strauss’ Answer Sheet” in the Washington Post.

Michelle Rhee’s resignation and departure have, predictably, provoked a flurry of conflicting reactions. Yet virtually all of them, from opponents and supporters alike, seem to assume that her tenure at the helm of the D.C. Public Schools (DCPS) helped to boost student test scores dramatically. She and D.C. Mayor Adrian Fenty made similar claims themselves in the Wall Street Journal (WSJ) just last week.

Hardly anybody, regardless of their opinion about Michelle Rhee, thinks that test scores alone are an adequate indicator of student success. But, in no small part because of her own emphasis on them, that is how this debate has unfolded. Her aim was to raise scores and, with few exceptions (also here and here), even those who objected to her “abrasive” style and controversial policies seem to believe that she succeeded wildly in the testing area.

This conclusion is premature. A review of the record shows that Michelle Rhee’s test score “legacy” is an open question.

There are three main points to consider:

- First, (by Rhee’s own admission) two simple policy changes enacted in 2007 were made, in part, to generate artificial test score gains during her first year (when roughly 75 percent of the DC-CAS increases occurred).

- Second, the district’s DC-CAS test was introduced in 2006, and a year or two after any new test is introduced – as students, teachers, and administrators become more familiar with it – it’s common to see an artificial inflation in scores. The beginning of Rhee’s tenure coincided with this period.

- Third, the students enrolled in DC public schools in 2010 were a significantly different group compared with the students of 2007, and this demographic shift may have driven some of the improvement in DC-CAS performance. A deeper look at the best evidence we have – from the National Assessment of Educational Progress (NAEP) – suggests that the increases in D.C.’s average NAEP scores between 2007 and 2009 (widely touted by Rhee and her supporters as confirmation of her effectiveness) could, in part, be a result of this demographic change. Math increases may be somewhat overstated, while reading scores may have been flat.

In a July 2009 Washington Post article, Bill Turque reported that, shortly after Rhee started, she made two bookkeeping changes that she knew would inflate performance during her first year, providing some invaluable political breathing room (she called some of these changes "low-hanging fruit"). First, she began enforcing a previously-unenforced policy that said high school students had to have enough credits to take the tests. And second, she changed the way that students who don’t take the tests are recorded in the data (they were previously counted as failing, but Rhee changed the policy to exclude them from the data entirely). Both changes resulted in groups of students being excluded from the data/test starting in 2008, but then results were compared with 2007, when both groups were included (the high schoolers without enough credits were almost certainly relatively low-scorers, on average, while the non-takers were previously counted as failing). These changes may have made sense for other reasons, but the fact that they inflated performance remains a serious problem when assessing DC-CAS results during Rhee’s tenure.
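The second bookkeeping change is easy to illustrate with arithmetic. The sketch below uses entirely made-up numbers (they are not DCPS data) and a hypothetical helper, `proficiency_rate`, to show how moving non-takers out of the denominator can raise a proficiency rate even when no student's performance changes.

```python
# Hypothetical numbers only: these are NOT DCPS data.
def proficiency_rate(proficient, test_takers, non_takers, count_non_takers_as_failing):
    """Percent proficient under one of two bookkeeping rules."""
    if count_non_takers_as_failing:
        denominator = test_takers + non_takers  # old rule: non-takers count as failing
    else:
        denominator = test_takers               # new rule: non-takers are dropped
    return 100.0 * proficient / denominator

# Identical performance under both rules: 400 of 900 test-takers are proficient,
# and 100 enrolled students do not take the test.
old_rule = proficiency_rate(400, 900, 100, count_non_takers_as_failing=True)
new_rule = proficiency_rate(400, 900, 100, count_non_takers_as_failing=False)

print(f"Old rule (non-takers count as failing): {old_rule:.1f}%")
print(f"New rule (non-takers excluded):         {new_rule:.1f}%")
print(f"Apparent gain with no change in learning: {new_rule - old_rule:.1f} points")
```

With these invented inputs the rate moves from 40.0 percent to roughly 44.4 percent. The size of any real effect depends on how many students were actually excluded, which is not known here.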

Without more data and details from DCPS, it is difficult to know how much these changes influenced the results.

We do know two things, however: First, because all year-to-year comparisons after 2008 would use consistent policies, the policy changes above would act to inflate results in 2008 only. And second, the vast majority of overall DC-CAS “gains” under Rhee occurred in 2008: 82 percent of the net increase in reading proficiency and 65 percent of the net increase in math proficiency occurred that year.

The new DC-CAS test and the “adjustment period."

The DC-CAS assessment system was introduced in 2006 by Clifford Janey, Rhee’s predecessor. (Full disclosure: Janey is a member of the Shanker Institute’s board of directors.) There is a good deal of evidence (here, here, and here, for example) that test scores tend to falter or stagnate for the first year or two after a new testing system is introduced. This is usually followed by large score increases, after students, teachers, and administrators become more familiar with the format and content of the new exam.

D.C. test results followed this pattern exactly.

There is no way to know precisely how much of the score increases were due to the expected “adjustment effect” from the new test. But it's safe to assume that some of the gains were. In the Post article discussed above, Rhee seemed to acknowledge this, saying that increases after 2009 would “absolutely” be real progress stemming from her reforms, implying that the previous years’ results might have been affected by the new policies. Talking about the first year during which the real benefits would show up (2010), Rhee said, “I’m very excited about next year."

The 2010 DC-CAS results were largely flat.

DCPS students are different now compared with 2007.

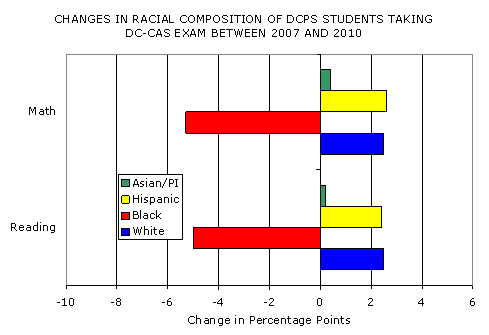

Finally, the student population of DCPS changed rather dramatically between 2007 and 2010, with a large outflow of black students (the poorest and thus lowest-performing subgroup) and a corresponding rise in the proportions of students who are white, Hispanic, and Asian. When you compare 2007 performance with that in later years, you are comparing unusually different groups of students, demographically speaking (like DC-CAS, most district data require "cohort-to-cohort" comparisons [e.g., elementary students one year compared with those the following year], but the overall demographic composition of students for an entire district tends to stay relatively stable over short periods of time). In the graph above, the somewhat unusual shift is clear.

[Note: Although this trend likely signals a net outflow of impoverished students from the district (black students have the highest poverty rates), there was no corresponding decline in the overall percentage of “economically disadvantaged” test takers. Indeed, there was an increase, from 62 percent in 2007 to 70 percent in 2010. It’s a safe assumption, though, that the recession was a factor in this shift. In other words, it seems likely that the increase in economically disadvantaged students was due to the reclassification of many of 2007’s “non-disadvantaged” students as “disadvantaged” between 2008 and 2010. A student's race does not change over time, which means that the graph above reflects a churn of students, not classifications. Still, the outgoing students may have been relatively high performers (and/or not economically disadvantaged). These types of issues are always present when interpreting changes in cross-sectional data.]

We can’t yet tell how much this demographic shift inflated aggregate performance, but it’s entirely possible that it did, at least to some extent.

The evidence from NAEP sheds a little light on the demographics issue. As an alternative to DC-CAS, DCPS frequently touts the results of the National Assessment of Educational Progress (NAEP) as evidence of their reforms’ “success” (though most of Rhee’s signature reforms, such as the WTU contract and IMPACT evaluation system, went into effect this year, and so had no effect on NAEP [or DC-CAS for that matter]).

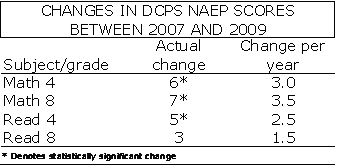

A quick summary of the NAEP results for DCPS (public, non-charter schools) is presented in the table above.

Although eighth grade reading scores showed no statistically significant increase (i.e., they were flat), the gains in fourth grade reading and in math in both fourth and eighth grade appear to be impressive (though the NAEP increases had been occurring for several years under Rhee's predecessors).

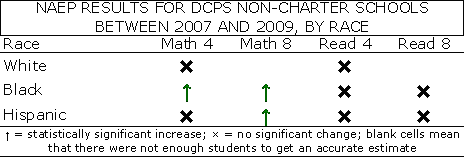

To get a rough idea of whether these improvements were real – or were, at least partially, a result of the change in cohort demographics (the shift was even stronger among NAEP test-takers) – we can check the changes in average scores for different subgroups. The simplified breakdowns by race are presented in the table above. Note that a separate breakdown for white eighth graders is not available in either subject, since there weren’t enough of them to get an accurate estimate (the same goes for Asian/Pacific Islander students in all grades/subjects).

As you can see, despite the Rhee/Fenty WSJ claim that “every student subgroup raised its performance," the results were actually mixed. Although there were significant increases among black students in both fourth and eighth grade math, there were no discernible increases in reading for either grade. This is unsurprising in regard to eighth grade reading, where the overall results were also flat, but troubling in regard to fourth grade reading, where the widely touted overall gains are not shared by any subgroup. There was, however, a significant increase in NAEP scores among low-income students between 2007 and 2009. This increase is noteworthy (black students tend to score lower because of poverty, parents' education, and other non-racial factors), but it too may in part be a byproduct of the severe recession, with students who would have been above the low-income cutoff in 2007 coming in below it in 2009. NAEP (and published DC-CAS results) doesn't follow individual students over time, so it is tough to untangle all of these factors.

As a result, there is no way to know the role that demography played in these results. But any time there are overall increases between cohorts, but none among any of the major racial subgroups, this is a red flag.
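The reason this pattern is a red flag can be shown with a small composition-effect calculation. The sketch below is illustrative only: the subgroup names, shares, and scores are invented (they are not NAEP or DC-CAS results), and `overall_average` is a hypothetical helper. It holds every subgroup's average score fixed and changes only the mix of students.

```python
# Hypothetical numbers only: these are NOT NAEP or DC-CAS results.
def overall_average(groups):
    """Enrollment-weighted average score across subgroups.

    `groups` maps a subgroup name to (share_of_students, average_score).
    """
    total_share = sum(share for share, _ in groups.values())
    weighted_sum = sum(share * score for share, score in groups.values())
    return weighted_sum / total_share

# Each subgroup's average score is identical in both years; only the mix changes.
year_1 = {"group_a": (0.85, 200.0), "group_b": (0.15, 250.0)}
year_2 = {"group_a": (0.75, 200.0), "group_b": (0.25, 250.0)}

before = overall_average(year_1)
after = overall_average(year_2)

print(f"Overall average, year 1: {before:.1f}")
print(f"Overall average, year 2: {after:.1f}")
print(f"Change with flat subgroup scores: {after - before:+.1f}")
```

In this invented case the overall average rises by about five points even though neither subgroup improved. That is why an overall gain shared by no major subgroup points toward a change in who was tested rather than a change in achievement.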

So, this is very tentative evidence that at least the fourth grade reading increases between 2007 and 2009 may have been, to some degree, artificial. On the other hand, the significant math increases among black students suggest that the overall math changes are real, though they may be somewhat overstated in the overall results, particularly for fourth grade (where scores for white and Hispanic students did not increase by a statistically significant margin).

*** Let’s quickly summarize. Three factors – surprisingly rapid demographic changes in DCPS students, a new state exam, and two changes to policies regarding who gets tested and how non-takers are accounted for in the data – are certain to have generated artificial increases in DC-CAS performance, even if we don’t know the extent.

We also know that this inflation was likely to have been especially strong in 2008 (when the majority of increases did occur), and especially weak in 2010 (when there were negligible increases and even decreases for some groups).

Finally, the same demographic changes that may have inflated the DC-CAS scores could have had the same effect on DC NAEP reading scores. The statistically significant increases in the NAEP math scores are also possibly overstated (though certainly still positive), due to this demographic change.

To all of this, add the fact that we don’t have any actual test score data from DC-CAS, and must rely on completely inadequate proficiency rates to assess performance (our only measure for students not in 4th or 8th grade, and for the years 2008 and 2010, when NAEP was not administered), and that, to my knowledge, there has not been a single even remotely sophisticated analysis of recent DCPS performance by an independent researcher with access to good data (an analysis, I might add, which could address many of the above issues).

So, it is fair to say that there are likely to have been some gains in achievement under Michelle Rhee’s tenure, but they were probably not dramatic, and certainly not unambiguous. The facts presented above strongly suggest that we should all sit back and wait for a more rigorous analysis of DCPS data before we issue any proclamations about Michelle Rhee’s testing “legacy."

Matt,

Thanks for such a handy and clear description.

As I recall, the only discernible improvements in NAEP 8th grade Reading, which I see as the most valuable metric, were in the top decile. That lends more support for your evidence that gentrification may be the explanation, but regardless, no improvements were made in the learning necessary for struggling students to make it in high school.

Secondly, I'd like your thoughts on the NAEP sample. If my district (which is 90% poor) was judged on a tested sample which was 70 something percent low income, it would look like we were working miracles. I'm no expert, but before NCLB, it looked like the D.C. NAEP samples were more representative of their population.

Thanks much for this thoughtful and in-depth review Matthew.

A value-added analysis certainly would provide additional clarity to this question and help us get an estimate of how much progress students are making. These estimates can provide powerful and accurate insight at the district and school levels, rather than pure attainment looks at the data which we know are heavily confounded by economic condition.

Appreciate your article here.

Jason Glass

Columbus OH

Thanks for your comment, John. I am confident that the NAEP sample is representative of the DCPS student population, both now and in the past. It is possible, however, that DCPS students are, as a group, becoming less reflective of all school-aged children in the District. Selection into charter schools would probably explain most of this trend, as well as, perhaps, a change in who opts for area private schools. Thanks again, MD

Matthew,

I'm glad to hear that about the NAEP sample. Also, that just strengthens your argument.