Multiple Measures And Singular Conclusions In A Twin City

A few weeks ago, the Minneapolis Star Tribune published teacher evaluation results for the district’s public school teachers in 2013-14. This decision generated a fair amount of controversy, but it’s worth noting that the Tribune, unlike the Los Angeles Times and New York City newspapers a few years ago, did not publish scores for individual teachers, only totals by school.

The data once again provide an opportunity to take a look at how results vary by student characteristics. This was indeed the focus of the Tribune’s story, which included the following headline: “Minneapolis’ worst teachers are in the poorest schools, data show.” These types of conclusions, which simply take the results of new evaluations at face value, have characterized the discussion since the first new systems came online. Though understandable, they are also frustrating and a potential impediment to the policy process. At this early point, “the city’s teachers with the lowest evaluation ratings” is not the same thing as “the city’s worst teachers.” Actually, as discussed in a previous post, the systematic variation in evaluation results by student characteristics, which the Tribune uses to draw conclusions about the distribution of the city’s “worst teachers,” could just as easily be viewed as one of the many ways that one might assess the properties and even the validity of those results.

So, while there are no clear-cut "right" or "wrong" answers here, let’s take a quick look at the data and what they might tell us.

From what I can gather, the Tribune (or, perhaps, the district) assigned a rating to each school based, I assume, on the average performance of its teachers on a given component. (This entails the loss of much data, and might cause confusion among those who conflate teacher evaluation outcomes with school effectiveness in general, but it's fine for our purposes here.) They published the schoolwide totals in a map, which we might recast, using the underlying data, in order to get a slightly better sense of the overall relationship between teacher ratings and the characteristics of the students they teach.

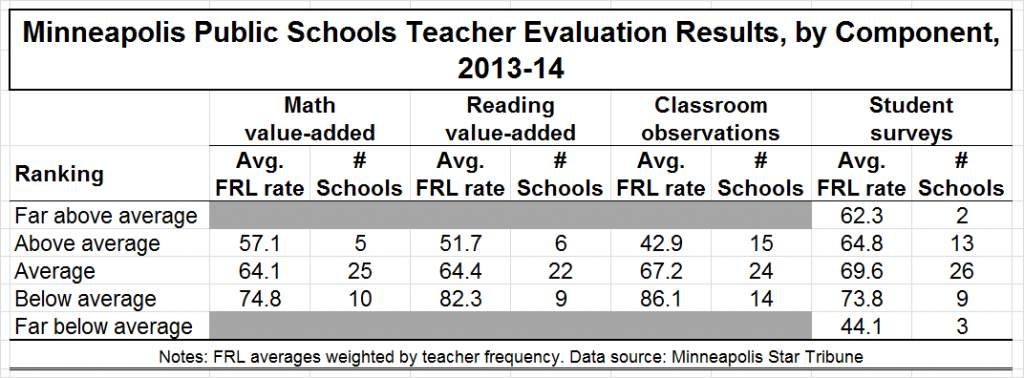

The simple table below tells you the average free/reduced-price lunch (FRL) rate, a rough proxy for poverty/income, for the schools falling into each rating category, with totals presented separately for each component of the evaluation system: classroom observations; reading value-added; math value-added; and student survey scores.

So, for example, among the five schools whose teachers, as a group, scored “above average” on the math value-added component, the (weighted) average poverty (FRL) rate was 57.1 percent. For the schools with teachers who scored “average” on the math value-added component, the FRL rate was 64.1 percent, and it was 74.8 percent for the schools with “below average” ratings. In other words, teachers in schools with higher poverty rates scored lower on the math value-added component than their colleagues in schools serving lower proportions of low-income students.
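For readers who want to reproduce this kind of tabulation, here is a minimal sketch of a weighted average by rating category. It assumes the weights are school enrollments, which is my guess rather than something the published data confirm, and the school names, enrollments and FRL rates are made up purely for illustration (they are not the Minneapolis figures).

```python
from collections import defaultdict

# Hypothetical records: (school, rating category, enrollment, FRL rate in percent).
# These values are invented for illustration only.
SCHOOLS = [
    ("School A", "above average", 400, 50.0),
    ("School B", "above average", 600, 60.0),
    ("School C", "average", 500, 70.0),
    ("School D", "below average", 300, 80.0),
    ("School E", "below average", 100, 40.0),
]


def weighted_frl_by_rating(schools):
    """Return {rating: enrollment-weighted mean FRL rate} for the given schools."""
    weighted_sum = defaultdict(float)
    total_weight = defaultdict(float)
    for _name, rating, enrollment, frl in schools:
        # Assumption: each school counts in proportion to its enrollment.
        weighted_sum[rating] += enrollment * frl
        total_weight[rating] += enrollment
    return {rating: weighted_sum[rating] / total_weight[rating]
            for rating in weighted_sum}


if __name__ == "__main__":
    for rating, frl in weighted_frl_by_rating(SCHOOLS).items():
        print(f"{rating}: {frl:.1f} percent FRL")
```

Note how the small, low-poverty "School E" pulls the "below average" group's figure down even with weighting. That is the same small-group sensitivity that matters when a category contains only a handful of schools.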

And the same basic conclusion applies to each of the other three measures – average poverty rates increase as evaluation outcomes decrease. (The observations component, judging by what I was able to download, is the only one for which actual average scores are available, and the correlation between these schoolwide scores and FRL is -0.61.) The sole exception is the schools scoring “far below average” on the student survey component, but this may be due to the fact that there are only three schools in this group, and one of them has a very low FRL rate.
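A school-level correlation like the one cited for the observations component can be checked along the following lines. This is only a sketch of a standard Pearson correlation. The FRL rates and observation scores below are invented placeholders, not the downloaded district data, so the printed value will not match the figure above.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sums of cross-products and squared deviations from the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical schoolwide values, for illustration only.
FRL_RATES = [25.0, 45.0, 60.0, 75.0, 90.0]   # percent FRL per school
OBS_SCORES = [3.3, 3.1, 3.2, 2.9, 2.8]       # average observation score per school

if __name__ == "__main__":
    print(f"r = {pearson_r(FRL_RATES, OBS_SCORES):.2f}")
```

A negative value here means only that schools with higher FRL rates tend to have lower average observation scores. By itself it says nothing about whether that reflects real differences in teaching or bias in the measure.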

Overall, then, the results of Minneapolis’ teacher evaluation system exhibit a fairly clear relationship with student poverty/income. This is what we're seeing in most other states and districts with new systems.

When it comes to understanding what these discrepancies mean, it bears reiterating that it is very difficult to separate the “real” differences from any possible “bias.” In other words, it may be the case that lower-performing teachers are, in fact, disproportionately located in higher-poverty schools, as these schools often have problems with recruitment, turnover, resources, etc. On the other hand, it may be that one or more of the system’s constituent measures is biased systematically against teachers in poorer schools. Or it may be some combination of these two general factors. (Note that the evidence of a relationship between all four components and FRL suggests that, if there is a problem per se, it is not limited to just one or two components.)

In any case, the Tribune’s headline, and the main thrust of its story, essentially assumes away all this complexity, and takes the ratings at face value (i.e., the “worst” teachers are those who received low ratings, with no attention at all to the possibility that the measurement is driving this relationship).

As the results of new teacher evaluations are released all over the nation, the first step must be examining the data – e.g., checking the relationships between components, within components over time, and whether they vary systematically by student characteristics. If, for example, evaluation ratings vary substantially by student income or other traits, this can carry implications for teacher recruitment, retention, transfers, morale, and other important outcomes (see here for a discussion of this issue). In some cases, officials might want to consider adjusting their models/protocols to “level the playing field.”

To be clear, this is a tough situation, and there are often no real correct answers, only trade-offs. Districts (with input from teachers and others) will have to decide whether action is needed on a case-by-case, measure-by-measure basis. At the very least, however, officials, reporters and the public in general should avoid assuming that the results of these brand new systems are telling us exactly what they’re supposed to be telling us right out of the gate.

- Matt Di Carlo

"the Tribune’s headline, and the main thrust of its story, essentially assumes away all this complexity"

Didn't you just define "newspaper"? :)

Good post.