A Below Basic Understanding Of Proficiency

Given our extreme reliance on test scores as measures of educational success and failure, I'm sorry I have to make this point: proficiency rates are not test scores, and changes in proficiency rates do not necessarily tell us much about changes in test scores.

Yet the Washington Post editorial about the latest test results from the District of Columbia Public Schools (DCPS) refers to proficiency rates (and changes in these rates) as "scores" at no fewer than seven different points in a 450-word piece. It is only one example of many.

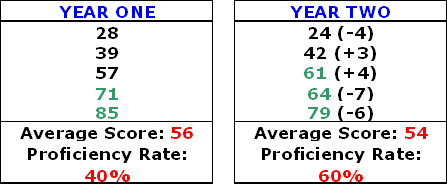

So, what's the problem? Let's look at a very simple example. Say we have a hypothetical class of five students who take a test, and they are compared to the five students who take the same test the next year. The "proficient" cutoff score is 60.

In year one, the proficiency rate was 40 percent (two kids made it), and the average score was 56. In year two, the proficiency rate went up to 60 percent, which might be seen as a massive improvement. But three of five students scored lower than their predecessors, and the average test score actually declined.
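The arithmetic is easy to check. Below is a small Python sketch with made-up scores, chosen only so that they reproduce the summary statistics described above: a cutoff of 60, 40 percent proficient with an average of 56 in year one, 60 percent proficient in year two, and three of five students scoring lower than their predecessors. The individual scores and the helper names `proficiency_rate` and `average` are hypothetical, and the sketch assumes a student "makes it" by scoring at or above the cutoff.

```python
# Hypothetical scores, invented for illustration. They are not taken from the
# post's table; they only reproduce the summary statistics described in the text.
CUTOFF = 60  # "proficient" cutoff score from the example

year_one = [40, 50, 50, 70, 70]
year_two = [35, 45, 60, 60, 72]


def proficiency_rate(scores, cutoff=CUTOFF):
    """Percent of students scoring at or above the cutoff (assumed rule)."""
    return 100 * sum(s >= cutoff for s in scores) / len(scores)


def average(scores):
    """Arithmetic mean score."""
    return sum(scores) / len(scores)


for label, scores in [("Year one", year_one), ("Year two", year_two)]:
    print(f"{label}: {proficiency_rate(scores):.0f}% proficient, "
          f"average {average(scores):.1f}")

# Compare each student with the student in the same position the year before.
lower = sum(new < old for new, old in zip(year_two, year_one))
print(f"{lower} of {len(year_one)} students scored lower than their predecessors")
```

Running it shows the proficiency rate rising from 40 to 60 percent while the average falls from 56.0 to 54.4.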

So it is entirely possible that proficiency rates decreased in DCPS while the average score increased, or vice versa. Proficiency rates can also rise or fall while the average score stays exactly the same. The same goes for changes in the share of students who fall into the other common categories, such as below basic, basic, and advanced.
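To see how a rate can move while the average stays put, and how the same applies to the other performance levels, here is another sketch with invented numbers. Only the proficient cutoff of 60 comes from the example; the basic (45) and advanced (80) cut scores, the scores themselves, and the helper names are hypothetical.

```python
from collections import Counter

# Hypothetical cut scores, highest first. Only "proficient" (60) comes from the
# post's example; "basic" and "advanced" are invented for this sketch.
CUTS = [("advanced", 80), ("proficient", 60), ("basic", 45)]
LEVELS = [name for name, _ in CUTS] + ["below basic"]


def level(score):
    """Return the highest performance level whose cut score the student meets."""
    for name, cut in CUTS:
        if score >= cut:
            return name
    return "below basic"


def level_rates(scores):
    """Percent of students falling into each performance level."""
    counts = Counter(level(s) for s in scores)
    return {name: 100 * counts[name] / len(scores) for name in LEVELS}


year_a = [40, 59, 59, 70, 72]  # hypothetical scores
year_b = [50, 60, 60, 60, 70]  # hypothetical scores with the same total

for label, scores in [("Year A", year_a), ("Year B", year_b)]:
    print(label, "average:", sum(scores) / len(scores), level_rates(scores))
```

Both years average 60, yet the share of students at the proficient level doubles from 40 to 80 percent, and the below basic share drops from 20 percent to zero.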

It's bad enough that we don't usually follow students over time when we assess progress, and that much of the year-to-year change in districts' test scores is often little more than random fluctuation. But changes in the percentage of students scoring proficient (or basic, below basic, and so on) are a particularly poor measure of progress, because they register only the students who happen to cross a cutoff.

So, let's not mistake proficiency or other rates for scores, especially when we are looking at year-to-year changes in these rates. They may look like the 0-100 percent scores we all got in school, but rates are actually one big step removed from the scores themselves: they record only which side of a cutoff each student landed on. Calling them scores is misleading and inaccurate.

Average scores, on the other hand, while inadequate in their own right, are probably more meaningful descriptive statistics when we're assessing school performance. Like proficiency rates, they do not (usually) follow students over time, so they too are snapshots that don't allow us to see how students progress (or fail to progress) as they move through school. But unlike proficiency rates, averages at least provide a summary statistic that speaks to the "typical" student, not just to whether students land above or below a cutoff score (one that is often arbitrary, by the way). In districts' or schools' "progress reports," changes in the average score should accompany changes in proficiency rates.
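A quick illustration of the "typical student" point: in the hypothetical sketch below, the three lowest-scoring students each gain 10 points but none reaches the cutoff of 60, so the proficiency rate does not budge while the average rises. The scores and the `summarize` helper are invented for illustration.

```python
CUTOFF = 60  # "proficient" cutoff from the post's example


def summarize(scores, cutoff=CUTOFF):
    """Return (percent at or above the cutoff, mean score)."""
    rate = 100 * sum(s >= cutoff for s in scores) / len(scores)
    return rate, sum(scores) / len(scores)


before = [30, 35, 45, 65, 75]  # hypothetical scores
after = [40, 45, 55, 65, 75]   # the three lowest each gain 10; none reaches 60

print("before:", summarize(before))
print("after: ", summarize(after))
```

The rate stays at 40 percent in both years, while the average rises from 50.0 to 56.0, so a report built only on rates would show no change at all.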

But there is a good reason why nobody was able to discuss average DC student scores alongside the proficiency rates: DCPS does not seem to provide average scores (at least none that I could find). The underlying data are certainly available to DCPS, since they are used to construct the proficiency rates. Other districts provide them. They should be public information.

Reporting averages this way would require that we distinguish between scaled scores within a proficiency level. Since most of these tests are calibrated around the proficiency cutoffs, and not so that scaled scores are equalized from year to year, a straight comparison of means is pretty meaningless. The scale is norm-referenced, and comparisons of results that fall between those norms do not have consistent interpretations from year to year.

In short, while a lot of work is being done to ensure that a 3 one year is the same as a 3 the next year, there is very little work on most of these tests to ensure that a 340 one year is a 340 the next year. That's one of the major difficulties with measuring student-level growth without moving to a more abstract level, and it's also a big part of the motivation behind developing new tests that are more sensitive to growth.


Thanks for the comment, Jason. My understanding of DC-CAS is that the scale scores are comparable (with the usual caveats) within grades/subjects (though not over the entire DCPS population). Even if my impression is incorrect, as you suggest, there are ways to standardize the scores across grade/subject/cutpoints. Given the limitations of rate changes as a cross-sectional measure of progress, as well as the overhyped attention paid to DCPS testing results, I should think that noisy averages are better than none. (As you may know, standardized scores are used for the calculation of DCPS value-added estimates, but I thought I read somewhere that that was to address differences in score dispersion between subjects.) One other idea would be school- and grade-level value-added estimates. Many districts report these publicly, and DCPS might do the same. Thanks again, MD
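The comment does not say which standardization method would be used. One common option, sketched here as an assumption rather than as DCPS's actual procedure, is to convert each scale score to a z-score within its grade and subject (using the population standard deviation) and then average those z-scores by school. All records, school names, and function names below are hypothetical. Note that this only puts scores on a common footing within a single year; it does not address the year-to-year scale comparability that Jason raises.

```python
from collections import defaultdict
from statistics import mean, pstdev

# Hypothetical student records: (school, grade, subject, scale_score).
# Invented data; a real file would contain far more students.
records = [
    ("School A", 4, "math", 460),
    ("School A", 4, "math", 460),
    ("School B", 4, "math", 440),
    ("School B", 4, "math", 440),
    ("School A", 5, "reading", 520),
    ("School A", 5, "reading", 580),
    ("School B", 5, "reading", 580),
    ("School B", 5, "reading", 520),
]


def standardize(rows):
    """Convert each scale score to a z-score within its grade/subject group."""
    groups = defaultdict(list)
    for _, grade, subject, score in rows:
        groups[(grade, subject)].append(score)
    stats = {key: (mean(s), pstdev(s)) for key, s in groups.items()}
    out = []
    for school, grade, subject, score in rows:
        mu, sd = stats[(grade, subject)]
        # Guard against a group where every student has the same score.
        z = (score - mu) / sd if sd > 0 else 0.0
        out.append((school, z))
    return out


def school_means(z_scores):
    """Average the standardized scores for each school."""
    by_school = defaultdict(list)
    for school, z in z_scores:
        by_school[school].append(z)
    return {school: mean(zs) for school, zs in by_school.items()}


print(school_means(standardize(records)))
```

Because each grade/subject group is rescaled to mean 0 and standard deviation 1, a school's average reflects how its students compare with their own grade and subject peers, regardless of how each test's scale is set.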