What You Need To Know About Misleading Education Graphs, In Two Graphs

There’s no reason why insisting on proper causal inference can’t be fun.

A few weeks ago, ASCD published a policy brief (thanks to Chad Aldeman for flagging it), the purpose of which is to argue that it is “grossly misleading” to make a “direct connection” between nations’ test scores and their economic strength.

On the one hand, it’s implausible to assert that better educated nations aren’t stronger economically. On the other hand, I can certainly respect the argument that test scores are an imperfect, incomplete measure, and the doomsday rhetoric can sometimes get out of control.

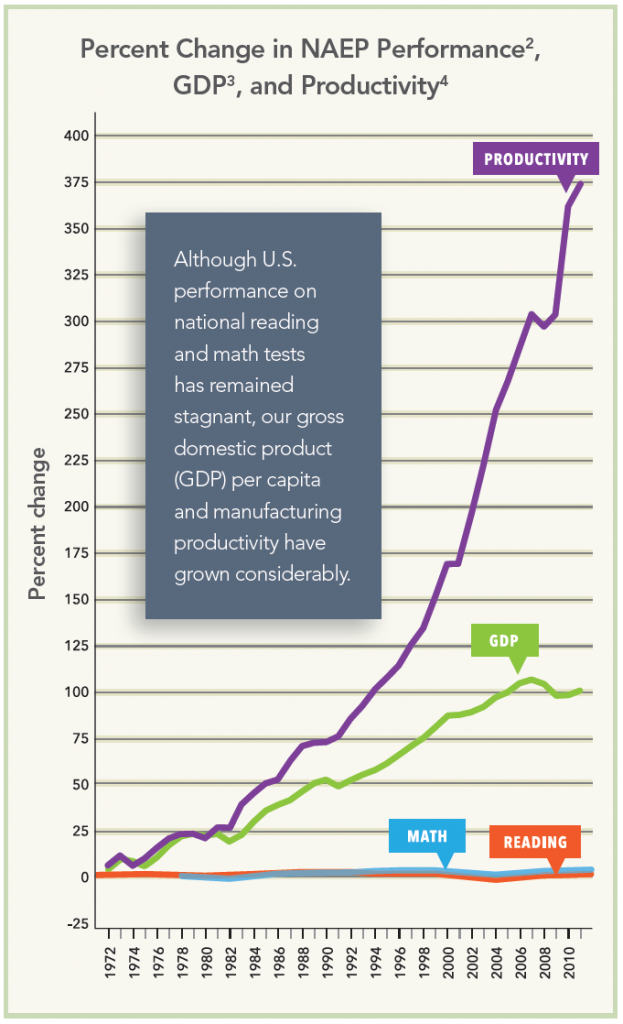

In any case, though, the primary piece of evidence put forth in the brief was the eye-catching graph below, which presented trends in NAEP versus those in U.S. GDP and productivity.

I won’t get into the details of why this is a poor presentation of data (for example, the “percent change” axis is not appropriate for NAEP scale scores and almost totally obscures changes in them, while visually pumping up the changes in productivity and GDP). Perhaps more importantly, the brief is supposed to address the argument that "stagnant assessment scores foretell an impending economic decline and threaten the nation's global competitiveness." The graph above, it says, shows that this is "simply not the case." This is, of course, a wholly inappropriate argument – i.e., that the simple coincidence of two trends (or lack thereof) is sufficient evidence for causal statements about the relationship between them.
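To make the axis problem concrete, here is a minimal sketch in Python. All of the numbers are hypothetical, chosen only for illustration; they are not actual NAEP or GDP figures, and the standard deviation of 30 scale points is likewise an assumption. The point is simply that percent change on a scale with an essentially arbitrary zero point makes a gain look negligible, whereas the same gain expressed in standard deviations may not be.

```python
def percent_change(series):
    """Percent change of each value relative to the first value."""
    base = series[0]
    return [100.0 * (value - base) / base for value in series]


def change_in_sd_units(series, sd):
    """Change from the first value, expressed in standard deviations."""
    base = series[0]
    return [(value - base) / sd for value in series]


# Hypothetical values, NOT actual NAEP or GDP figures.
scale_scores = [285.0, 286.0, 288.0, 290.0]
gdp_index = [100.0, 150.0, 220.0, 300.0]
ASSUMED_SD = 30.0  # assumed student-level standard deviation, in scale points

score_pct = percent_change(scale_scores)
gdp_pct = percent_change(gdp_index)
score_sd = change_in_sd_units(scale_scores, ASSUMED_SD)

print(f"score, percent change:     {score_pct[-1]:.2f}%")
print(f"GDP index, percent change: {gdp_pct[-1]:.2f}%")
print(f"score, change in SD units: {score_sd[-1]:.2f}")
```

Plotted on a shared percent-change axis, the score line in this toy example would look flat next to the GDP line, even though a gain of roughly one-sixth of a standard deviation is not obviously trivial.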

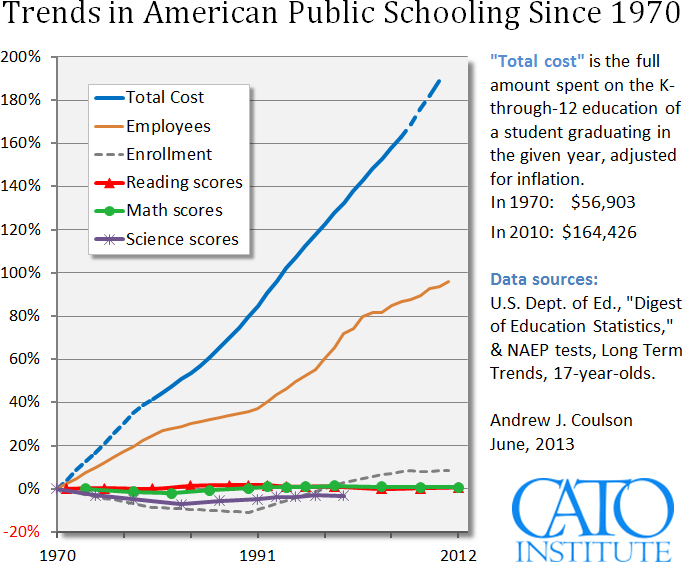

Morgan Polikoff was quick to note that the ASCD graph was “eerily reminiscent” of a figure from a rather different source -- the Cato Institute -- making a rather different argument.

This graph has many of the same measurement issues (e.g., see here and here), and takes essentially the same approach as in the ASCD figure – comparing changes in NAEP with other indicators, in this case K-12 spending and the number of education employees, in order to make a causal pseudo-argument. This time, however, the implied direction of causality is reversed – instead of making an argument about whether testing results have any influence (e.g., on productivity/GDP), the idea here is that spending and hiring do not influence testing results.

If we have a little fun and take these two figures at face value, we might conclude that key inputs such as spending have no effect on (testing) outcomes, which in turn have no effect on economic performance. The policy implications of this story are, to put it mildly, frustrating.

To have even more fun, and to illustrate further the severe limitations of this approach, one could create a hybrid of these two graphs, in which rising education spending and hiring are presented alongside increasing GDP and productivity, with the "conclusion" being that education spending and hiring lead directly to economic growth/productivity. Alternatively, we might juxtapose the flat trend in 17-year-old Long Term NAEP scores with a similarly stagnant measure such as real wages (i.e., flat test scores "cause" flat wages). But we'd be no closer to understanding the situation.
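The "hybrid graph" problem is easy to reproduce with made-up data. The sketch below, in Python, builds two series that are independent by construction but both trend upward over time; the names `spending` and `gdp` are just labels on simulated numbers, not real data. Their levels are almost perfectly correlated, while their year-to-year changes generally are not.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def first_differences(series):
    """Year-to-year changes: each value minus the one before it."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


# Simulated data: two upward trends with independent noise (fixed seed).
rng = random.Random(0)
years = range(40)
spending = [100 + 2.0 * t + rng.gauss(0, 1.0) for t in years]
gdp = [50 + 3.0 * t + rng.gauss(0, 1.5) for t in years]

r_levels = pearson(spending, gdp)
r_changes = pearson(first_differences(spending), first_differences(gdp))

print(f"correlation of levels:  {r_levels:.3f}")
print(f"correlation of changes: {r_changes:.3f}")
```

Neither number says anything about causation, of course. Differencing only removes the shared time trend; it is a quick check on how much of an apparent relationship is just "both lines go up," not a substitute for a real research design.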

Look, there is, of course, more than a grain of truth in the (almost entirely obscured) nuanced version of both graphs' underlying arguments. For example, as noted above, there is a case to be made that test scores are a highly imperfect proxy for the countless factors that contribute to GDP and productivity (and, by the way, GDP and productivity are themselves imperfect proxies for economic performance). Similarly, the evidence is clear that adequate funding for schools is a necessary but still insufficient condition for improving student performance, and that how schools spend money is as important as how much they spend.

But that's what makes oversimplified, sensationalist graphs like these so exasperating. Instead of promoting a discussion about finding better ways to spend money or the importance of tracking and understanding the factors that influence growth and productivity, these graphs seem intended to start a conversation by ending it, right at the outset, in a manner that typically is compelling only to those who already agree with the conclusions.

- Matt Di Carlo

Far from wanting to shut down the conversation about American education trends, I'm pleased that this chart regularly sparks such conversations. I respond to Matt and the other critics he links to here: http://www.cato.org/blog/addressing-critics-purportedly-no-good-very-ba…

I would have liked to have seen the Cato chart with the "total cost" broken down into "teacher salaries" and "other". My guess is that salaries would appear to pretty closely track test scores, while the "wasted" increase in expense would overwhelmingly be in "other" - buildings, computers, administrators, etc.