Who's Afraid of Virginia's Proficiency Targets?

The accountability provisions in Virginia’s original application for “ESEA flexibility” (or "waiver") have received a great deal of criticism (see here, here, here and here). Most of this criticism focused on the Commonwealth's expectation levels, as described in “annual measurable objectives” (AMOs) – i.e., the statewide proficiency rates that its students are expected to achieve at the completion of each of the next five years, with separate targets established for subgroups such as those defined by race (black, Hispanic, Asian, white), income (subsidized lunch eligibility), limited English proficiency (LEP), and special education.

Last week, in response to the criticism, Virginia agreed to amend its application, though it’s not yet clear exactly how the state will calculate the new rates (only that lower-performing subgroups will be expected to make faster progress).

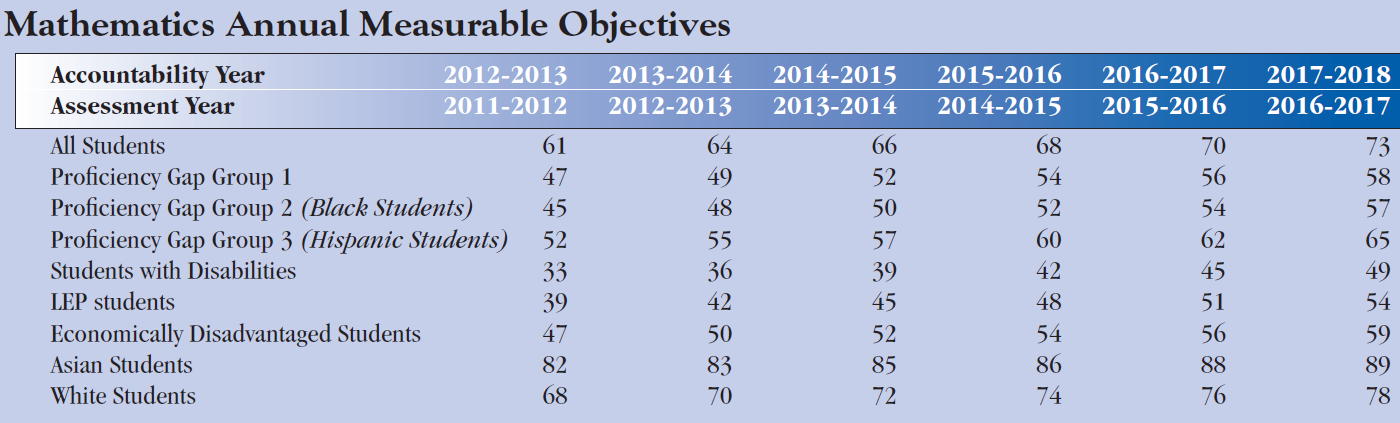

In the meantime, I think it’s useful to review a few of the main criticisms that have been made over the past week or two and what they mean. The actual table containing the AMOs is shown above (for math only; reading AMOs will be released after this year, since there’s a new test).

Note: “Proficiency Gap Group 1” includes special education, LEP and economically disadvantaged students.

The targets are too low (absolute performance edition). As you can see in the table above, by 2017, all students are expected to reach 73 percent math proficiency, African-American students ("Gap Group 2") 57 percent, Hispanic students ("Gap Group 3") 65 percent, students with disabilities 49 percent, etc. These targets were widely criticized as too low.

I don’t know what the “proper” expectations should look like, but I do know that these, at least at the statewide level, were expectations for changes in various proficiency rates, not their end points. In other words, each one of these target AMOs – the proficiency rates expected in 2017 – is the cumulative result of five years of annual expectations for “growth” (it’s not really growth, but that’s a different story), with the starting point (2011-12) representing the current rate (overall, and for each subgroup).

AMOs are forward-looking. It may be an appealing argument to say that 50-60 percent proficiency is a low target, but that really depends on where you’re starting from, especially given that this is a five-year plan. In other words, you can only criticize the statewide targets for being too low insofar as the expected “growth” is too slow, whether overall or for specific subgroups.

For individual schools, however, the interpretation is different, since they are being expected to meet the benchmarks in the table above, no matter where they start out from. This means that any assessment of the targets' rigor will depend, in no small part, on their current proficiency rates.

For some schools (e.g., those with already-high rates), these expectations are without question way too low, but that’s because the crudely calculated AMOs, in Virginia and elsewhere, don't take each school's initial "distance" from the target into account. This is discussed further below, but for now, it bears mentioning that all the discussion of Virginia's plan has focused on the statewide targets, when it's actually individual schools that will have to meet them.

The targets won’t close achievement gaps. This criticism, for example as put forth by Kris Amundson, is fair enough. Ms. Amundson focuses on the “growth” rates in the table above, and notes that all subgroups (by race, income, etc.) are expected to make roughly the same amount of “progress” between now and 2017. This does not reflect an emphasis on closing these gaps, since even if the targets are met, the end gaps will basically be the same (though they actually would have decreased as a proportion, and, in either case, proficiency rates are an absolutely terrible way to measure trends in achievement gaps).

(Side note: There’s a reason why the AMOs don’t close gaps between demographic groups. They're calculated to reflect the gaps between high- and low-scoring students/schools within these groups [see the first footnote].*)

The basic argument here is that we should expect all children to achieve at an acceptable level (e.g., proficient), regardless of their characteristics or background. I think that’s a strong argument – persistent achievement gaps, whether defined by race/ethnicity, income, or any other characteristics, are very important social measures. So long as they exist, we know that equality of opportunity has not been achieved.

But there’s also a tension here, one that is common in U.S. school accountability systems: narrowing achievement gaps necessarily implies that you expect lower-scoring subgroups (e.g., low-income students) to progress much more quickly than their more advantaged peers.

This is feasible only if policymakers are willing to commit to an infusion of new resources, targeted only for poor, struggling students and not their middle-class peers. Andy Rotherham hits on this point when he says, “Virginia could set common targets that assume minority and poor students can pass state tests at the same rates as others and at the same time provide substantially more support to these students and their schools."

This is important. If Virginia or any other state is not prepared to provide the means by which schools can compel big gains from their lower-scoring students, then it’s not rational policy to hold them accountable for doing so. In this sense, the important question is as much about whether states are serious in their commitment to achieving the goals as about the goals themselves. You can't really assess one without the other.**

The targets are too low (“growth” edition). Implied in both of the previously-discussed criticisms, particularly the first one, is the idea that Virginia’s expectations for “growth” in proficiency are too low.

In general, Virginia is shooting for a 10-17 percentage point increase in statewide math proficiency over the next five years (the expectations vary slightly by subgroup). Contrasted with some other states’ target increases, and certainly with the lofty goals of NCLB, this may sound low.

And perhaps they are low – I don’t know what the “proper” targets should be. But let's think about this for a second, and in doing so, let's put aside the issues with proficiency rate changes, as well as the fact that individual schools will have to make very different rates of progress to meet the benchmarks. Instead, let's look at the statewide targets as if they were actually growth expectations (i.e., schools need to pick up a certain number of points, rather than reach benchmarks set based on statewide averages), and take them at face value.

You might say that, put simply, a 10-17 percentage point increase in proficiency represents a 10-17 percentage point increase in student performance over a five-year period.

True progress is slow and sustained, especially at the state level. I'm not sure that a 10-17 percentage point increase over five years, if it’s “real” (and that's a huge "if"), should necessarily receive a knee-jerk "too low" reaction.

Moreover, for many of these subgroups, that increase is rather huge relative to current proficiency rates. For instance, in 2011-12, only 33 percent of students with disabilities were considered to be proficient in math. The original Virginia plan (the one they’re now amending) was aiming for 49 percent in 2017. That is a 16 percentage point gain on a base of 33, or roughly a 50 percent increase in the rate, which is a very large proportional change over a relatively short period of time. And there are 20-30 percent expected increases for most of the subgroups in the table.
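To make the distinction between percentage points and proportional change concrete, here is a minimal sketch using the two figures quoted above for students with disabilities (33 percent in 2011-12, 49 percent targeted for 2017). The function names are my own and purely illustrative.

```python
def point_change(start_rate, target_rate):
    """Change measured in percentage points."""
    return target_rate - start_rate


def relative_change(start_rate, target_rate):
    """Change measured as a percent of the starting rate."""
    return (target_rate - start_rate) / start_rate * 100


# Students with disabilities, math: 33 percent (2011-12) to 49 percent (2017 target).
points = point_change(33, 49)
relative = round(relative_change(33, 49), 1)

print(points, relative)
```

The same 16-point gain works out to about 48.5 percent of the starting rate, which is why a target that sounds modest in points can still be a large proportional jump for a low-scoring subgroup.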

Again, I can’t say whether these goals should be higher (especially since opposing “high expectations” doesn't win one many friends, and one of the purposes of accountability systems is to set very high expectations as a motivational tool of sorts). But it's good to see that some states, at least, are paying attention to realistic expectations, even if they are doing so in an ill-considered manner.

***

For me, however, the big problems with Virginia’s plan precede issues pertaining to subgroup breakdowns or the proper calibration of statewide expectations. And they are not specific to Virginia, but rather spelled out by the guidelines for NCLB waivers.

Specifically, I'm more concerned about the previously-mentioned issue of setting absolute performance targets for individual schools based on statewide baselines, as well as the severe limitations of proficiency rate changes, especially when measuring gap trends.

The statewide proficiency targets are being applied to each individual school – for example, any school that doesn’t have a proficiency rate of 73 percent in 2017 will have failed to meet state expectations, no matter where they started out this year. Many schools, particularly those with proficiency rates that are currently well below average, whether across all students or for specific subgroups, will fall short even if they manage to increase their rates even more rapidly than required by the statewide targets. Conversely, schools with rates that are already quite high will be judged effective even if their students exhibit no improvement.***
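A rough sketch of how this plays out, assuming two made-up schools: the 73 percent benchmark comes from the plan as described above, but the school names and their starting and ending rates are hypothetical, chosen only to illustrate the point.

```python
BENCHMARK_2017 = 73  # all-students math target for 2017, as cited above


def meets_benchmark(rate_2017, benchmark=BENCHMARK_2017):
    """A school meets the AMO if its 2017 rate reaches the statewide benchmark."""
    return rate_2017 >= benchmark


# Hypothetical schools: (2011-12 rate, 2017 rate). These are not real data.
schools = {
    "School A": (40, 65),  # large gain from a low starting point
    "School B": (80, 80),  # no improvement from a high starting point
}

results = {
    name: {"gain": end - start, "meets": meets_benchmark(end)}
    for name, (start, end) in schools.items()
}

for name, outcome in results.items():
    print(name, outcome)
```

Under these assumptions, the school that gained 25 points still falls short, while the school that gained nothing clears the bar, simply because of where each one started.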

Granted, there are few high-stakes consequences attached to the AMOs, and there are plenty of interventions that might be usefully targeted based on AMO-style measures. But if we really wanted to set rigorous objectives for which schools would be held accountable, whether in Virginia or elsewhere, we would focus on using growth-oriented measures that actually gauge the quality of instruction that schools provide, and we would also stop relying on changes in cross-sectional proficiency rates, which are, in my view, too flawed to play such a prominent role in any high-stakes accountability system.****

In this sense, the prior problem is the choice of measures, not how high or low they are set.

- Matt Di Carlo

*****

* Virginia’s (rather clumsy) method of calculating AMOs was not about closing gaps between subgroups, but rather within them. Each AMO was calculated by subtracting the proficiency rate of the 20th percentile school from that of the 90th percentile school (for each subgroup separately). The difference was then divided in half, and that was established as the expected increase in proficiency by 2017 (with roughly equal "progress" expected in each year before then). In other words, they’re not trying to close gaps between, say, white and black students, but rather between low-scoring (20th percentile) and high-scoring students/schools (90th percentile) within each group. Virginia seems to be claiming that their new math tests (first administered last year) didn't enable them to tell that their AMO calculation method would not close gaps. This is plausible if the 90th/20th percentile gaps were larger for lower-scoring subgroups under the old tests compared with the new, but it doesn't change the fact that the original formula was intended to close gaps within, not between groups.
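For readers who want to see the arithmetic, here is a minimal sketch of the calculation as I've described it in this footnote: half the difference between the 90th and 20th percentile schools, added to the current rate in roughly equal annual steps. The input values are hypothetical (the actual percentile figures are not reported here), and the function name and exact equal-step schedule are my own simplifications.

```python
def amo_targets(current_rate, p20_rate, p90_rate, years=5):
    """Yearly proficiency targets for one subgroup.

    The total expected increase is half the gap between the 90th and 20th
    percentile schools, spread in equal steps over the given number of years.
    """
    total_increase = (p90_rate - p20_rate) / 2
    return [
        round(current_rate + total_increase * year / years, 1)
        for year in range(1, years + 1)
    ]


# Hypothetical subgroup: current rate 50, 20th percentile school 30, 90th percentile school 70.
targets = amo_targets(current_rate=50, p20_rate=30, p90_rate=70)
print(targets)
```

Note that nothing in the calculation refers to any other subgroup, which is the point: the expected increase depends only on the spread of schools within a group, so it has no built-in tendency to narrow gaps between groups.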

** Virginia’s plan says that schools failing to meet AMOs must “develop and implement improvement plans to raise the achievement of student subgroups not meeting the annual benchmarks."

*** The waiver application says that schools can be given a kind of pass on meeting AMOs if they decrease their "failure rate" 10 percent in any given year (it's not clear whether this is percentage points or an actual percentage).
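The two readings lead to different bars, as this small sketch shows. It assumes that "failure rate" means 100 minus the proficiency rate, which the application may or may not intend, and it uses the 33 percent statewide rate for students with disabilities purely as a convenient starting value. The function names are hypothetical.

```python
def needed_rate_points(proficiency_rate, cut=10):
    """Proficiency rate needed if the failure rate must drop by `cut` percentage points."""
    failure_rate = 100 - proficiency_rate
    return round(100 - (failure_rate - cut), 1)


def needed_rate_relative(proficiency_rate, cut=10):
    """Proficiency rate needed if the failure rate must drop by `cut` percent of itself."""
    failure_rate = 100 - proficiency_rate
    return round(100 - failure_rate * (1 - cut / 100), 1)


start = 33  # percent proficient, so an assumed failure rate of 67 percent
by_points = needed_rate_points(start)
by_percent = needed_rate_relative(start)
print(by_points, by_percent)
```

Starting from 33 percent, the percentage-point reading requires reaching 43 percent, while the proportional reading requires only 39.7 percent, so the ambiguity matters most for groups with high failure rates.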

**** Like all states applying for waivers, Virginia has its own state-specific rating system, which is separate from AMOs, and does include a growth model portion (though it's given a rather low weight). But the controversy over Virginia's plan has focused entirely on the AMOs, as correctly noted here.

"If Virginia or any other state is not prepared to provide the means by which schools can compel big gains from their lower-scoring students, then it’s not rational policy to hold them accountable for doing so." I think your assumption that these are rational systems designed by rational people is incorrect. Of course, you know that given the extremely insightful analyses you have done over the past year. If only policymakers would read your blog. But creating a rational, fair accountability system does not make for great sound bytes on the election trail.