The Stability And Fairness Of New York City's School Ratings

New York City has just released the new round of results from its school rating system (called “progress reports”). The system relies considerably more on student growth (60 out of 100 points) than on absolute performance (25 points), and there are efforts to partially adjust most of the measures via peer group comparisons.*

All of this indicates that, compared with many other systems around the U.S., the city's system is more focused on the test-based performance of schools than on that of their students.

The ratings are high-stakes. Schools receiving low grades – a D or F in any given year, or a C for three consecutive years – enter a review process by which they might be closed. The number of schools meeting these criteria jumped considerably this year.

There is plenty of controversy to go around about the NYC ratings, much of it pertaining to two important features of the system. They’re worth discussing briefly, as they are also applicable to systems in other states.

One – There is, in practice, often a trade-off between test-based measures' stability and their association with student characteristics. NYC’s system is frequently criticized for being unstable – that is, schools get different grades between years. This argument is not without merit – for example, only 42 percent of schools received the same grade in 2012 as in 2011 (the year-to-year correlation in the actual scores, upon which the letter grades are based, is 0.48, which is usually interpreted as moderate).
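For readers who want to run this kind of stability check on their own data, here is a minimal sketch of the two statistics mentioned above: the share of schools keeping the same grade, and the year-to-year correlation in scores. The function names and the five-school dataset are made up for illustration; they are not the city's data or my actual analysis code.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

def share_same_grade(grades_a, grades_b):
    """Fraction of schools whose letter grade is identical in both years."""
    same = sum(1 for a, b in zip(grades_a, grades_b) if a == b)
    return same / len(grades_a)

# Hypothetical data: one entry per school, same order in both years.
scores_2011 = [72.0, 55.5, 61.0, 48.0, 80.5]
scores_2012 = [65.0, 60.0, 58.5, 52.0, 70.0]
grades_2011 = ["A", "C", "B", "C", "A"]
grades_2012 = ["B", "B", "B", "C", "A"]

print(share_same_grade(grades_2011, grades_2012))
print(pearson(scores_2011, scores_2012))
```

Note that the two statistics can diverge: grades are coarse categories, so a small change in score near a cutoff flips the grade even when the correlation in scores is fairly high.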

It's important to note that one should not expect perfect stability. For one thing, schools do improve (or regress) in "real" performance between years, so some change would be expected from even a perfect system (conversely, even a completely random system would exhibit some degree of stability just by chance).

However, the primary reason for the instability is the system’s heavy reliance on growth model estimates. These measures fluctuate a great deal between years, especially in smaller schools. Some of this is "real" change, but most of it is imprecision.**

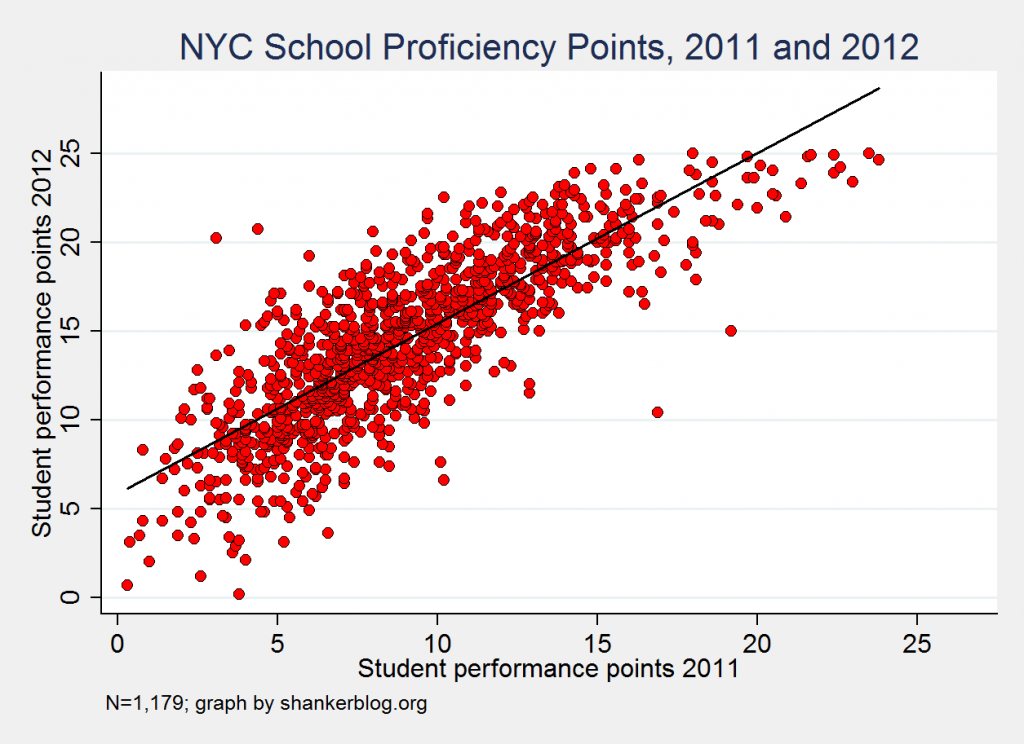

The other (non-growth) components are actually quite stable. For example, the scatterplot presents the year-to-year relationship of schools’ absolute student performance scores (the number of points each school received out of 25), which are based on proficiency rates. (Note: In all analyses, above and below, the sample is limited to elementary, middle and combined K-8 schools, for a total of about 1,200.)

There is some fluctuation - the mean deviation is plus or minus 5.5 points - but the measure is quite stable overall (the correlation is 0.82, which is high).

The same goes for the school environment component (15 possible points), which is based on attendance, parent surveys, safety and other outcomes; the year-to-year correlation is 0.79.

The growth points, in contrast, are comparatively unstable (0.27). And since they represent (at least nominally) 60 percent of schools’ final scores, the final ratings fluctuate more (see our prior post for more on this issue in NYC, as well as additional discussion of the model that the city uses).

But the greater weight on growth scores has its advantages too – they may actually be telling you something about school effectiveness, and they therefore mitigate the degree to which schools’ final scores are dependent upon the characteristics of the students they serve.***

In several of the state school rating systems I’ve thus far reviewed, absolute performance is more heavily weighted, whether directly (e.g., Louisiana) or indirectly (e.g., Florida). As a result, in these states, schools serving smaller proportions of low-income, special education, etc., students are almost guaranteed a good score.

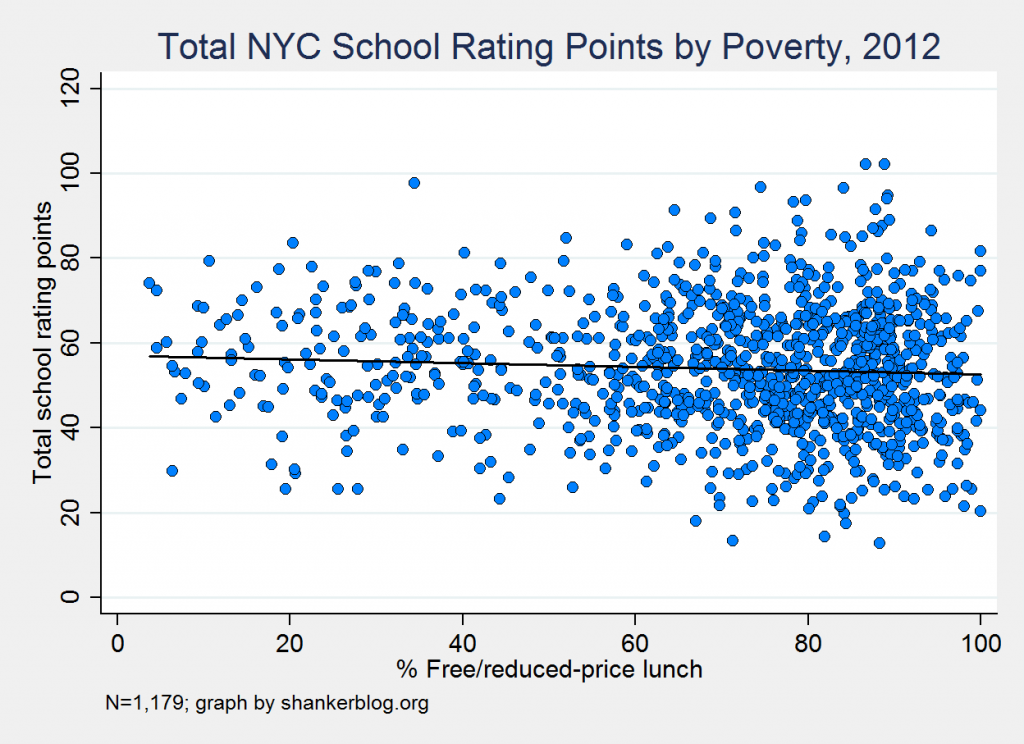

This is not the case in NYC, at least when looking at the actual scores and not the grades (the latter are discussed below). Just to give a visual idea of this weak association, the scatterplot presents schools’ final scores by poverty rates (as measured, albeit imperfectly, by the percent of students eligible for subsidized lunch).

There’s virtually no discernible relationship here (the correlation coefficient is -.06, which is very low). And there is also only a very weak relationship between final scores and other characteristics, such as the proportion of students who are special education (and the proportion in “self-contained” classes).

In other words, schools serving different student populations, at least in terms of those characteristics that are measured directly, do not get systematically different scores (again, the grades are a somewhat different story, as discussed below).

This is a trade-off that all states (and districts) face in choosing the test-based measures for these systems. If they emphasize growth, ratings will tend to be less strongly associated with student characteristics but more unstable. If, on the other hand, absolute performance plays a starring role, the ratings will be more stable, but schools serving disadvantaged populations will tend to get lower scores no matter how much their students improve.

(Side note: Interestingly, this trade-off is not really seen with the non-test measure - the "environmental" component. It is both relatively stable and only weakly associated with student characteristics.)

Two – How scores are sorted into actual ratings matters. In NYC (and elsewhere), schools’ actual index scores are sorted into categories defined by letter grades (A-F), and this is how decisions are made. There are (potential) consequences for receiving low grades, and they also get a lot of media attention.

Schools receiving an F or D in any given year enter a kind of review process after which they might be closed, and the same goes for schools receiving three consecutive C’s.
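The review trigger can be written down in a few lines. This is only my reading of the rule as described above (a D or F in the most recent year, or a C in each of the last three years); the function name `enters_review` is hypothetical, and the city's actual process surely involves more than this check.

```python
def enters_review(grades):
    """Return True if a school's grade history triggers the review process.

    grades: list of letter grades, oldest first, most recent last.
    Assumed rule: D or F this year, or three consecutive C's ending this year.
    """
    if not grades:
        return False
    if grades[-1] in ("D", "F"):
        return True
    return len(grades) >= 3 and all(g == "C" for g in grades[-3:])

print(enters_review(["A", "B", "F"]))  # low grade in the latest year
print(enters_review(["C", "C", "C"]))  # three consecutive C's
print(enters_review(["B", "C", "C"]))  # only two C's so far
```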

NYC has drawn much criticism for the manner in which it converts schools’ scores into these letter grades. Put simply, for elementary and middle schools, the city imposes a distribution on the scores – that is, it assigns grades by sorting schools’ final scores, and then applying the following simple rule: the highest-scoring 25 percent receive A’s; the next highest scoring 35 percent get B’s; the next 30 percent get C’s; the next 7 percent D’s; and, finally, the lowest scoring 3 percent are given F’s.

In other words, by design, around 10 percent of NYC schools receive D’s or F’s every year, no matter how their actual scores turn out.
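To make the mechanics concrete, here is a rough sketch of grading on an imposed distribution. It is a simplification under stated assumptions: the function name `assign_grades` is mine, the handling of ties and rounding is a guess, and it ranks all schools together, whereas the city uses separate cutoffs for elementary, middle and K-8 schools.

```python
# Percent of schools assigned each grade, from highest score to lowest.
GRADE_SHARES = [("A", 25), ("B", 35), ("C", 30), ("D", 7), ("F", 3)]

def assign_grades(scores):
    """Map {school: final score} to {school: letter grade} by rank alone."""
    ranked = sorted(scores, key=scores.get, reverse=True)  # best first
    n = len(ranked)
    grades = {}
    cumulative = 0
    start = 0
    for grade, share in GRADE_SHARES:
        cumulative += share
        # Assumption: round each cumulative cutoff; the last bin takes the rest.
        end = n if grade == "F" else round(cumulative * n / 100)
        for school in ranked[start:end]:
            grades[school] = grade
        start = end
    return grades

# Hypothetical example: 100 schools with scores 0 through 99.
example = {f"school_{i}": float(i) for i in range(100)}
result = assign_grades(example)
print(sum(1 for g in result.values() if g in ("D", "F")))
```

Because only rank matters, adding ten points to every school's score leaves every grade unchanged, which is exactly the feature critics object to.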

This strikes many people as unfair, since it seems like the city is attempting to ensure that a minimum number of schools are subject to closure review each year. I can't speak to the intentions of the people who made these decisions, but I would point out that the primary alternative – choosing fixed thresholds for each letter grade – is also arbitrary and subject to political considerations.

To me, however, the noteworthy feature about the sorting scheme is less the imposed distribution than the fact that some schools “get a pass” from the system’s lowest grades. More specifically, schools might receive a score low enough to warrant an F or D, but still not receive that grade.

There are two ways this can happen: first, schools that received an A last year can’t get any lower than a D this year, no matter what; and second, schools with math and ELA proficiency rates in the top third citywide cannot receive any lower than a C.
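These two exceptions amount to a floor on the grade a school can receive. A minimal sketch, assuming the grade implied by the score alone is already known (the names `apply_exceptions`, `raw_grade` and `top_third_proficiency` are hypothetical, not the city's terminology):

```python
ORDER = ["F", "D", "C", "B", "A"]  # worst to best

def apply_exceptions(raw_grade, prior_grade, top_third_proficiency):
    """Return the final grade after applying the two grade floors.

    raw_grade: grade implied by the school's score alone.
    prior_grade: last year's grade.
    top_third_proficiency: True if math and ELA proficiency rates are
        in the top third citywide.
    """
    floor = "F"
    if prior_grade == "A":
        floor = "D"  # last year's A schools cannot fall below a D
    if top_third_proficiency:
        floor = "C"  # high-proficiency schools cannot fall below a C
    # Keep whichever of the raw grade and the floor is better.
    return max(raw_grade, floor, key=ORDER.index)

print(apply_exceptions("F", "A", False))
print(apply_exceptions("D", "B", True))
print(apply_exceptions("B", "A", True))
```

The floors only ever raise a grade; a school scoring well enough for a B keeps its B regardless of either condition.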

The first exception is almost never applied - "A to F" shifts are extremely uncommon, in part because around 40 percent of schools' grades are based on measures (absolute performance and "environment") that tend to remain stable between years.

The latter "exception," on the other hand, doesn't happen much, but it does occur, and it means that the schools with the highest absolute performance levels, which will also tend to be those serving fewer disadvantaged students, are unlikely to ever face risk of closure (they would need three C’s in a row, which, due in no small part to their high proficiency rates, is very rare). As would be expected, around two-thirds of the schools meeting the "top-third" proficiency criterion have poverty rates in the lowest quartile citywide, and virtually all of them are below the median.

Conversely, the schools with relatively higher proportions of hard-to-serve students end up being more overrepresented in the rating categories (D/F) to which punitive consequences are attached.

By my calculation, 14 schools were granted an “exception” this year – i.e., they should have gotten an F or D but did not. Of those, four escaped an F.****

That may not sound like a lot, but you have to remember that, by design, only 2-3 percent of NYC schools receive an F every year, which means that roughly one in six schools that would have received this failing grade were given a pass. And over one in ten schools that would have received a D were upgraded, the vast majority thanks to their high proficiency rates.

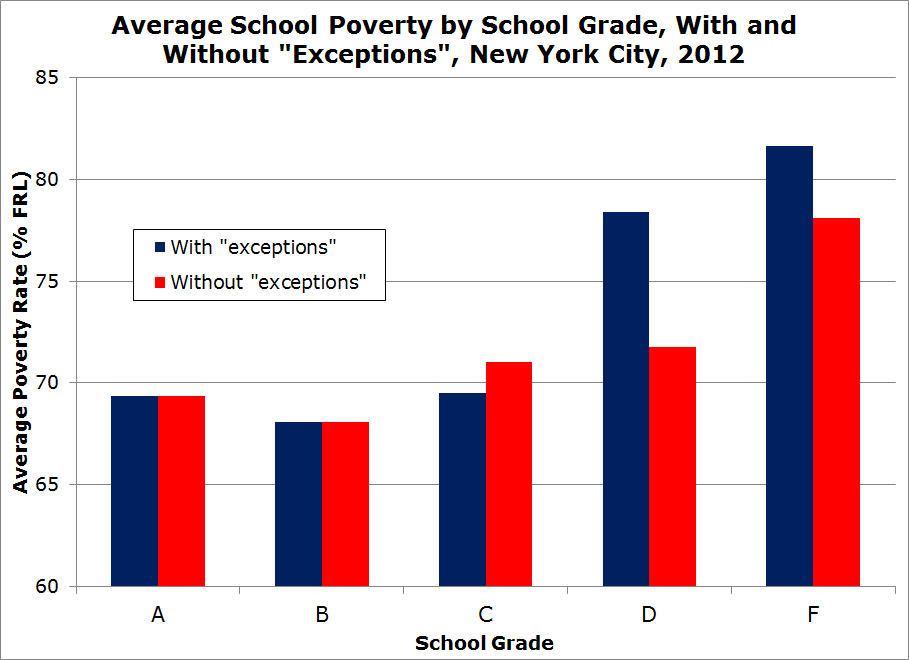

The graph provides a quick visualization of how these "exceptions" influence the association between poverty (subsidized lunch eligibility) and grades (note that the vertical axis begins at 60 percent FRL, so the variation looks more pronounced than it would in a zero-centered graph).

When the NYC "exceptions" are applied (the actual system), there is a much clearer uptick in the average poverty rates of schools receiving F's and especially D's, compared with the (hypothetical) distribution when these exceptions are not applied. This is because a bunch of lower-poverty schools receiving a D are bumped up to a C, due to their top-third proficiency rates.

This “loophole” is a deliberate choice to have a double standard of sorts - these schools are treated differently than the others, based on a measure (absolute proficiency) that is already a significant factor in the calculation of the scores themselves.

One might argue that if the city is confident enough in its system that the lowest-scoring 10 percent of schools should be subject to a process that might lead to the most extreme possible consequence - closure - then no school receiving such a score should be given a pass simply because it scores well on a component that is already included in the formula. On the other hand, closure is a costly, difficult process, and might be best directed at schools serving students most in need of improvement.

In either case, the point here is that NYC’s actual rating scores aren’t, on the whole, strongly associated with student characteristics such as poverty, but schools receiving an F do tend to be higher-poverty, and the city's "top third" exception, by choice, exacerbates this association at the business end of the distribution. Roughly 30 percent of the city's schools, the vast majority of which serve lower proportions of disadvantaged children, are effectively exempted from the lowest grades (D and F). These adjustments are important to consider when interpreting schools' category ratings, as well as the manner in which they are linked to consequences.

- Matt Di Carlo

*****

* The final component is 15 points for “school environment,” which is based on a combination of attendance rates and surveys of parents, students and teachers. Schools can also earn “extra credit” points for the performance of various student subgroups. This year, the maximum number of bonus points was 16 for elementary and 17 for middle/K-8 schools. As a result, there were a few schools that received final scores slightly above 100. As for the peer group comparisons, I could raise some objections to the manner in which they're identified, but they at least represent an effort to assess schools vis-a-vis comparable counterparts, one that most other states' systems do not make.

** Using multiple years of growth data can certainly help, but even then, the estimates would still be much more unstable overall than absolute performance. NYC most likely passes on pooling data because of their desire to examine year-to-year changes in test-based effectiveness (as measured by their growth model).

*** Many growth models' estimates, including NYC's, are associated with student characteristics, but far less so than proficiency rates. Moreover, due to the manner in which the city converts actual estimates into rating scores (e.g., peer group comparisons), there is only a very weak relationship between the number of growth points a school receives and its poverty rate.

**** I did this by simply identifying those schools with final scores that were below the D or F thresholds, but did not receive those grades (there are separate cutoff points for elementary, middle and K-8 schools, and they are listed on schools' progress reports).
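A sketch of that identification step, for anyone who wants to replicate it. The field names, the function names and the cutoff values below are placeholders I made up for illustration; the real cutoffs are the ones listed on schools' progress reports.

```python
def expected_low_grade(score, c_min, d_min):
    """Return 'F' or 'D' if the score alone implies that grade, else None."""
    if score < d_min:
        return "F"
    if score < c_min:
        return "D"
    return None

def find_exceptions(schools, cutoffs):
    """List schools whose score implies a D or F but whose grade differs.

    schools: list of dicts with 'name', 'type', 'score', 'grade'.
    cutoffs: {school type: (minimum score for a C, minimum score for a D)}.
    """
    flagged = []
    for s in schools:
        c_min, d_min = cutoffs[s["type"]]
        expected = expected_low_grade(s["score"], c_min, d_min)
        if expected is not None and s["grade"] != expected:
            flagged.append((s["name"], expected, s["grade"]))
    return flagged

# Hypothetical cutoffs and schools, not real values.
cutoffs = {"elementary": (40.0, 30.0)}
schools = [
    {"name": "School 1", "type": "elementary", "score": 25.0, "grade": "D"},
    {"name": "School 2", "type": "elementary", "score": 35.0, "grade": "C"},
    {"name": "School 3", "type": "elementary", "score": 35.0, "grade": "D"},
    {"name": "School 4", "type": "elementary", "score": 50.0, "grade": "B"},
]
print(find_exceptions(schools, cutoffs))
```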

NOTE TO READERS: Due to a merging error (and my failure to spot this problem), there were a handful of duplicate schools in my dataset. This affected a few of the correlation coefficients in the originally-published version of this post. None of these changes was larger than 0.01, but I updated the text with the corrected figures. I apologize for the careless mistake.

MD