Proficiency Rates And Achievement Gaps

The change in New York State tests, as well as their results, has inevitably resulted in a lot of discussion of how achievement gaps have changed over the past decade or so (and what they look like using the new tests). In many cases, the gaps, and trends in the gaps, are being presented in terms of proficiency rates.

I’d like to make one quick point, which is applicable both in New York and beyond: In general, it is not a good idea to present average student performance trends in terms of proficiency rates, rather than average scores, but it is an even worse idea to use proficiency rates to measure changes in achievement gaps.

Put simply, proficiency rates have a legitimate role to play in summarizing testing data, but the rates are very sensitive to the selection of cut score, and they provide a very limited, often distorted portrayal of student performance, particularly when viewed over time. There are many ways to illustrate this distortion, but among the more vivid is the fact, which we’ve shown in previous posts, that average scores and proficiency rates often move in different directions. In other words, at the school level, it is frequently the case that the performance of the typical student -- i.e., the average score -- increases while the proficiency rate decreases, or vice-versa.
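To make the mechanics concrete, here is a minimal sketch using made-up numbers. The scores, the cut score of 250, and the function name `proficiency_rate` are all hypothetical and chosen only for illustration; they are not drawn from any actual test. The average rises because the lowest-scoring students improve a lot, while the rate falls because one student slips just below the cut.

```python
from statistics import mean

CUT_SCORE = 250  # hypothetical proficiency threshold, not a real cut score


def proficiency_rate(scores, cut=CUT_SCORE):
    """Share of students scoring at or above the cut score."""
    return sum(s >= cut for s in scores) / len(scores)


# Hypothetical scores for the same small school in two years.
year1 = [200, 210, 249, 251, 252, 300]
year2 = [225, 235, 248, 249, 252, 310]

for label, scores in (("Year 1", year1), ("Year 2", year2)):
    print(f"{label}: average = {mean(scores):.1f}, "
          f"proficient = {proficiency_rate(scores):.0%}")
```

In this toy case the average score goes up by almost ten points while the proficiency rate drops from one half to one third, which is the kind of divergence described above.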

Unfortunately, the situation is even worse when looking at achievement gaps. To illustrate this in a simple manner, let’s take a very quick look at NAEP data (4th grade math), broken down by state, between 2009 and 2011.

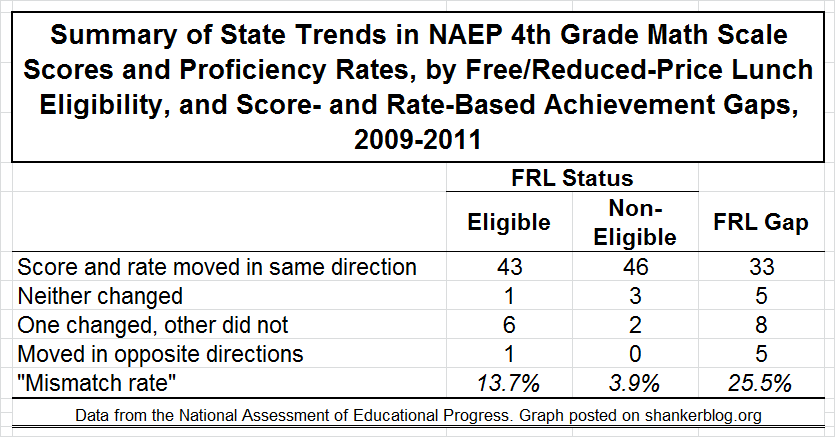

The table above summarizes “score versus rate trends” for three measures. In the first column are the trends in scores and rates for students eligible for free/reduced-price lunch (FRL), a rough proxy for poverty. The second column is students who are not eligible. And, finally, the third column is the trend in score/rate gaps between these two groups. The numbers in the table represent the number of states.

So, for example, among FRL-eligible students, there were 43 states in which the average NAEP score and the proficiency rate moved in the same direction between 2009 and 2011; that is, either both increased or both decreased. In one state, neither the rate nor the score changed. And, finally, in seven states, either one moved and the other was flat or the two moved in opposite directions. We might characterize these final two permutations as “mismatches,” since the scores and rates tell a different “story.” Thus, among FRL-eligible students, there were a few anomalies, but virtually all of them (six out of seven) were situations in which there was a slight change in the score or rate while the other was flat.*
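The categories described above can be sketched as a small classification function. This is only an illustration of the logic, not the procedure actually used to build the table: the function name, the labels, and the sample inputs are hypothetical, and it assumes that “flat” means a change of exactly zero in the reported (rounded) figures.

```python
def sign(x):
    """Return -1, 0, or 1 depending on the direction of a change."""
    return (x > 0) - (x < 0)


def classify_trend(score_change, rate_change):
    """Compare the direction of a score change with that of a rate change."""
    s, r = sign(score_change), sign(rate_change)
    if s == 0 and r == 0:
        return "no change"
    if s == 0 or r == 0:
        return "mismatch: one moved, one flat"
    if s == r:
        return "same direction"
    return "mismatch: opposite directions"


# Hypothetical (score change, rate change) pairs, not actual NAEP results.
examples = [(2, 3), (-1, -2), (0, 0), (1, 0), (2, -1)]
for score_change, rate_change in examples:
    print(score_change, rate_change, "->",
          classify_trend(score_change, rate_change))
```

The last two labels correspond to the “mismatches” discussed in the text.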

The trends are more consistent among non-eligible students (most likely because the state-level samples for this group are larger). There were only two states that we might classify as “mismatches,” and both were “one moved and the other didn’t” situations. So, when we’re looking at simple changes, the rates do sometimes tell a different story than the scores, even across entire states, but there’s far more agreement than disagreement (these discrepancies are more common among individual schools, since the state-level NAEP samples are far larger than the enrollment of the typical school).

Now check out the gap trends in the rightmost column. In one out of four states, the scores and rates provide conflicting information about the trend. Most disturbingly, in five states, they move in different directions. One might draw rather different conclusions depending on whether rates or scores are used.
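A toy example may help show why gaps are especially vulnerable. The numbers below are invented for illustration (including the cut score of 250 and the helper names), and the groups are far smaller than any real sample. The lower-scoring group improves substantially, so the gap in average scores narrows, but its students cluster just below the cut, so the gap in proficiency rates widens.

```python
from statistics import mean

CUT_SCORE = 250  # hypothetical proficiency threshold


def proficiency_rate(scores, cut=CUT_SCORE):
    """Share of students scoring at or above the cut score."""
    return sum(s >= cut for s in scores) / len(scores)


def gaps(non_eligible, eligible):
    """Return (score gap, rate gap) between two groups of students."""
    score_gap = mean(non_eligible) - mean(eligible)
    rate_gap = proficiency_rate(non_eligible) - proficiency_rate(eligible)
    return score_gap, rate_gap


# Hypothetical scores for two groups in two years.
non_eligible_y1, eligible_y1 = [240, 255, 260, 270], [200, 220, 251, 252]
non_eligible_y2, eligible_y2 = [245, 255, 262, 270], [230, 240, 248, 249]

score_gap_y1, rate_gap_y1 = gaps(non_eligible_y1, eligible_y1)
score_gap_y2, rate_gap_y2 = gaps(non_eligible_y2, eligible_y2)

print(f"Score gap: {score_gap_y1:.2f} -> {score_gap_y2:.2f}")
print(f"Rate gap:  {rate_gap_y1:.0%} -> {rate_gap_y2:.0%}")
```

Because a gap is a difference between two measures, each of which can be distorted by where students sit relative to the cut score, the distortions can compound rather than cancel.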

In short, relying on changes in proficiency rates is usually a bad idea. Whenever possible, one should use changes in average scores, as they provide a much more holistic picture of the performance of the “typical student.”

However, when we’re talking about trends in achievement gaps, there’s a simple rule: Don't use proficiency rates unless you have absolutely no other option. They are almost guaranteed to be misleading.

- Matt Di Carlo

*****

* Note that, in some of these cases, the change may not be statistically discernible, but that’s not a big problem for the purposes of this discussion.

How does one evaluate a teacher or a school district? To really answer this question, you must actually be involved with the school system, examining daily what progress is made or what is lacking in the total school environment.

The Los Angeles school district has given its soul to the devil. There is so much corruption within it and its UTLA that, unless you are closely involved in school programs, it is hard to know all of the deviance that is at work in the city of L.A.

Talk to a senior teacher and you will find that teachers do not run the school system. They are told what they can and cannot teach. They are blocked many times from helping their students be successful in their own classrooms.

The school board is using money for projects more for themselves than for students, making this entire educational story look as if it is the teachers. It's not the teachers. It's how the school is administered.

Talk to the EXCELLENT senior teachers who were fired by the School Board because it was stated that they make too much money.

Would you please protect the young teachers who are spending thousands of dollars to be teachers and then finding that they made a terrible mistake in just wanting to "HELP" young children? They do so just because, as God says, "Love your neighbor as yourself."