When Checking Under The Hood Of Overall Test Score Increases, Use Multiple Tools

When looking at changes in testing results between years, many people are (justifiably) interested in comparing those changes for different student subgroups, such as those defined by race/ethnicity or income (subsidized lunch eligibility). The basic idea is to see whether increases are shared between traditionally advantaged and disadvantaged groups (and, often, to monitor achievement gaps).

Sometimes, people take this a step further by using the subgroup breakdowns as a crude check on whether cross-sectional score changes are due to changes in the sample of students taking the test. The logic is as follows: If increases show up among both the more advantaged and the more disadvantaged cohorts, then an overall increase cannot be attributed to a change in the backgrounds of students taking the test, as the subgroups exhibited the same pattern. (For reasons discussed here many times before, this is a severely limited approach.)
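To make that logic concrete, here is a minimal Python sketch. It assumes the overall average is simply a share-weighted average of subgroup averages. Every number in it is made up for illustration (none are TUDA results), and the function names are hypothetical rather than part of any NAEP tool.

```python
def overall_mean(shares, means):
    """Share-weighted average of subgroup means (shares sum to 1)."""
    return sum(shares[g] * means[g] for g in shares)


def decompose_change(shares_0, means_0, shares_1, means_1):
    """Split an overall change into two parts that sum to the total.

    within:      subgroup score changes, weighted by year-0 shares
    composition: shifts in subgroup shares, valued at year-1 means
    """
    within = sum(shares_0[g] * (means_1[g] - means_0[g]) for g in shares_0)
    composition = sum((shares_1[g] - shares_0[g]) * means_1[g] for g in shares_0)
    return within, composition


# Hypothetical numbers: no subgroup's average changes, but the mix does.
shares_2011 = {"eligible": 0.8, "not_eligible": 0.2}
shares_2013 = {"eligible": 0.7, "not_eligible": 0.3}
means_2011 = {"eligible": 250.0, "not_eligible": 280.0}
means_2013 = {"eligible": 250.0, "not_eligible": 280.0}

total = overall_mean(shares_2013, means_2013) - overall_mean(shares_2011, means_2011)
within, composition = decompose_change(shares_2011, means_2011, shares_2013, means_2013)
print(round(total, 2), round(within, 2), round(composition, 2))
```

In this made-up case the overall average rises by about 3 points even though neither subgroup's average moves, which is exactly the scenario the subgroup check is meant to catch. Note that the check only rules out shifts along the variable used to define the subgroups. A change in student backgrounds within each subgroup would go undetected.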

Whether testing data are cross-sectional or longitudinal, these subgroup breakdowns are certainly important and necessary, but it's wise to keep in mind that standard variables, such as eligibility for free and reduced-price lunches (FRL), are imperfect proxies for student background (actually, FRL rates aren't even such a great proxy for income). In fact, one might reach different conclusions depending on which variables are chosen. To illustrate this, let’s take a look at results from the Trial Urban District Assessment (TUDA) for the District of Columbia Public Schools between 2011 and 2013, in which there was a large overall score change that received a great deal of media attention, and break the changes down by different characteristics.

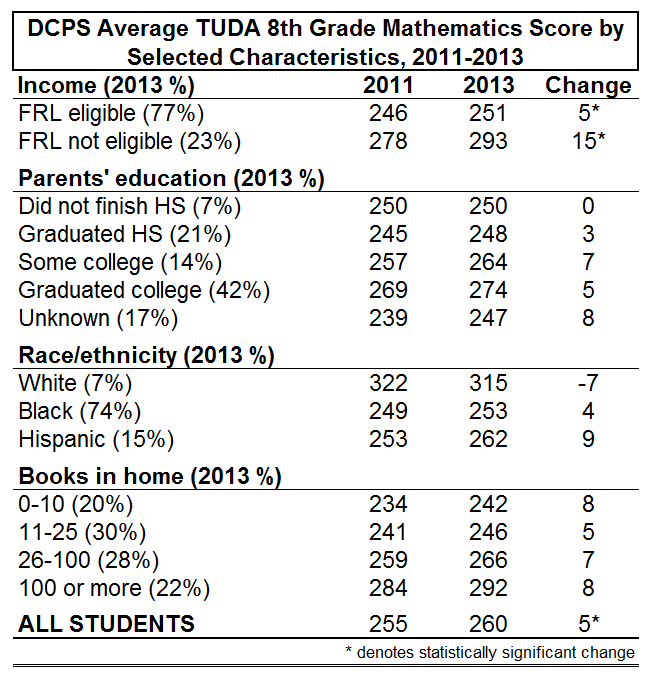

The first table presents average eighth grade math scores of DCPS students in 2011 and 2013. Asterisks denote 2011-2013 changes that are statistically significant, but it’s important to note that many of the estimates in the table are based on rather small samples, and statistical significance is to a large extent a function of sample size. Since this exercise is purely illustrative, we might ease our focus on statistical significance a little bit, while still keeping in mind that these are imprecise estimates. It also bears mentioning that score changes are correlated with prior year scores.
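The point about sample size can be illustrated with a short Python sketch. It assumes simple random samples of equal size in both years and a common standard deviation, which is a simplification (NAEP uses a complex sampling design, so its actual standard errors are computed differently). The 5-point change and the standard deviation of 35 are hypothetical inputs, not figures from the table.

```python
import math


def z_for_change(diff, sd, n_per_year):
    """z statistic for a difference between two independent cohort means.

    Assumes the same SD and sample size in both years and simple random
    sampling, which is a simplification of NAEP's actual design.
    """
    standard_error = sd * math.sqrt(2.0 / n_per_year)
    return diff / standard_error


# Hypothetical inputs: a 5-point change and a student-level SD of 35.
for n in (100, 400, 1600):
    z = z_for_change(diff=5.0, sd=35.0, n_per_year=n)
    print(n, round(z, 2), "significant at 5%" if abs(z) > 1.96 else "not significant")
```

Under these assumptions, the same 5-point change falls short of the conventional 5 percent threshold with 100 students per year but clears it with 400, because quadrupling the sample halves the standard error.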

If you look at the overall results (“All students,” at the bottom), you see that there was a 5-point increase between 2011 and 2013 (this was inappropriately interpreted as policy evidence by those who favor the reforms in DC, but that’s a different story).

Now, if we wanted to see whether that overall increase was shared by advantaged and disadvantaged students, we might disaggregate the scores using different variables that are collected by TUDA and ostensibly capture, at least in some respects, student background/advantage.

Let’s start with free/reduced-price lunch eligibility (FRL), which is by far the most common variable used in disaggregations. There is a very clear discrepancy – the average score among eligible (i.e., lower-income) students increased 5 points, compared with a massive 15 point jump among non-eligible students. This suggests that, on average, eighth graders in 2013 scored higher than their peers in 2011, but that the increase was disproportionately found between the advantaged (i.e., non-FRL-eligible) cohorts. This might lead one to conclude that there were increases all around, but more so for advantaged students. But let's move on and see how things look using other proxies.

Another potentially useful measure of student background is parents’ education (in this case, the highest degree achieved by either parent). This variable is usually not available for state test results (and it is only available in NAEP/TUDA for eighth graders).

If we look at these changes, there was actually no increase between cohorts whose parents did not graduate high school, and a relatively small jump (3 points) between cohorts composed of children of high school graduates. In addition (and bearing in mind that these are small samples and thus subject to imprecision), the increase was actually larger between cohorts whose parents have some college, compared with those whose parents graduated college.

So, on the whole, the breakdown by parents’ education is marginally consistent with the FRL results, at least in a broad brush sense, but it is far more nuanced and suggests substantively different (tentative, given the imprecision) conclusions. The FRL breakdown suggested increases among all students, along with larger increases among the more advantaged students, whereas the parents' education results indicate no increase among the most disadvantaged students (at least insofar as parental education captures this), and a not-so-discernible pattern as one moves up the categories.

Now, things get even more interesting. The disaggregation by race/ethnicity shows that there was actually a fairly sizable cohort decrease for the traditionally highest-scoring student subgroup (white students), whereas scores for black and Hispanic students went up. This would seem to suggest that the overall increase was concentrated among the disadvantaged cohorts, which is the opposite conclusion from that indicated by the FRL and (to a lesser extent) parental education breakdowns.

(Two side notes: First, notice that the achievement gap defined in terms of FRL eligibility is increasing, while the gap defined in terms of race/ethnicity is shrinking. Second, I am obliged to reiterate, yet again, that these estimates are all based on relatively small samples, and that the white sample in DCPS is particularly tiny. This means that the estimates for this subgroup should be interpreted with even more caution.)

A fourth and final variable that is sometimes used as a proxy for student advantage/background (and, again, one that is almost never available for state testing results) is the number of books in the house. If you look at these results, there were cohort increases in all four categories, and no discernible pattern. This suggests yet another possible interpretation: that the increases were quite evenly distributed.

So, overall, we have four variables that ostensibly capture some aspect of student advantage/background, and four different (illustrative) conclusions about the distribution of overall changes. This is certainly a picture that one might miss if one were just disaggregating by lunch eligibility.
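For readers who want to run this kind of comparison on their own data, here is a minimal Python sketch of the bookkeeping. The subgroup averages below are placeholders invented for illustration, not the TUDA figures, and the variable and function names are hypothetical.

```python
# Hypothetical subgroup averages as (earlier year, later year) pairs.
# These are placeholders, NOT the TUDA results discussed here.
scores = {
    "lunch_eligibility": {"eligible": (240, 244), "not_eligible": (270, 282)},
    "parent_education": {
        "no_hs": (238, 238),
        "hs": (242, 245),
        "some_college": (255, 263),
        "college": (268, 273),
    },
}


def changes_by_variable(scores):
    """Score change (later minus earlier) for every category of every variable."""
    return {
        var: {cat: later - earlier for cat, (earlier, later) in cats.items()}
        for var, cats in scores.items()
    }


def change_range(changes):
    """Smallest and largest subgroup change for each variable."""
    return {var: (min(c.values()), max(c.values())) for var, c in changes.items()}


changes = changes_by_variable(scores)
for var, (low, high) in change_range(changes).items():
    print(var, "changes range from", low, "to", high)
```

Laying the changes side by side for each variable, rather than for lunch eligibility alone, makes it easier to see when different proxies tell different stories.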

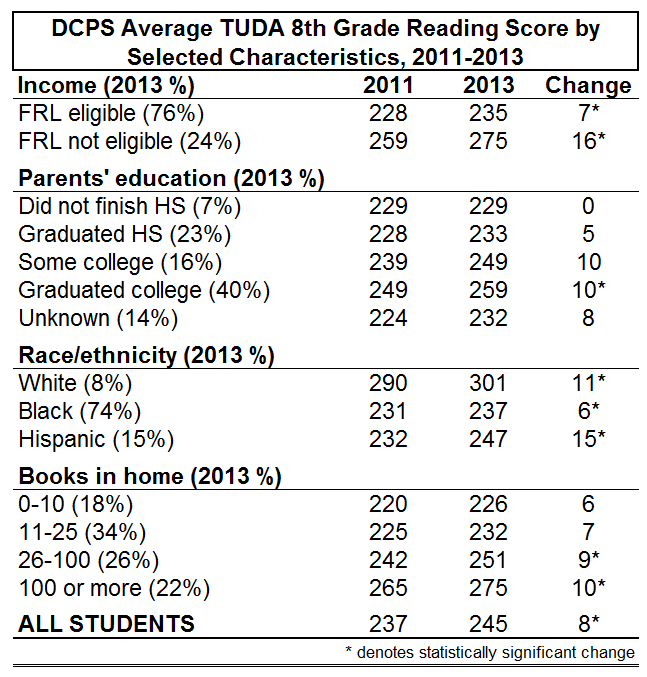

Now, let’s quickly check out the same table for eighth grade reading (I do not present fourth grade results because, as mentioned above, parents’ education is not available).

The situation here is quite similar, with two exceptions. First, there is a more discernible pattern in the size of the cohort increases as the number of books in the house increases (and some statistically discernible changes). This might be expected given that these are reading results. Nevertheless, statistical significance aside, the relationship is not particularly pronounced (and might even be interpreted as somewhat evenly distributed).

But the big difference is in the results for white students. Whereas, as shown in the first table, math scores decreased for white students between 2011 and 2013, they increased by a similar amount in reading. Notice, however, that the largest increase occurred between Hispanic cohorts, suggesting that the size of the increases does vary meaningfully by subgroup, but is not consistently related to whether or not the subgroup is traditionally higher- or lower-scoring. (And the rather surprising discrepancy between the math and reading changes for white students further illustrates how difficult it can be to draw grand conclusions about the distribution of cohort changes.)

Nevertheless, once again, we have four variables, and four somewhat different impressions of how the changes shake out by subgroups defined in different ways.

This is obviously just one district and just one year-to-year comparison (2011-2013); these results no doubt vary by TUDA district (and by state on NAEP).

However, what these simple comparisons clearly suggest is not controversial – the degree to and manner in which different variables measure student advantage/disadvantage is complicated, and one must be very careful about drawing conclusions regarding whether and how overall changes in performance mask underlying differences. And NAEP/TUDA serve as a good example of how the collection of contextual variables can augment the utility of testing results.

- Matt Di Carlo