The Semantics of Test Scores

Our guest author today is Jennifer Borgioli, a Senior Consultant with Learner-Centered Initiatives, Ltd., where she supports schools with designing performance based assessments, data analysis, and curriculum design.

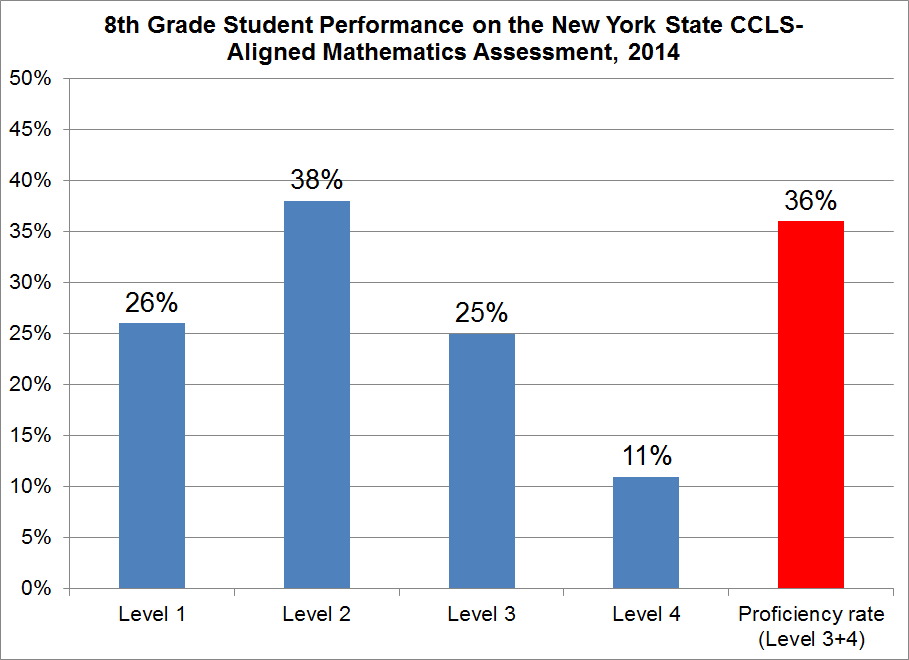

The chart below was taken from the 2014 report on student performance on the Grades 3-8 tests administered by the New York State Education Department (NYSED).

Based on this chart, which of the following statements is the most accurate?

A. “64 percent of 8th grade students failed the ELA test”

B. “36 percent of 8th graders are at grade level in reading and writing”

C. “36 percent of students meet or exceed the proficiency standard (Level 3 or 4) on the Grade 8 CCLS-aligned ELA test”

Seems like a trick question, doesn’t it? It’s almost as if all three choices are basically saying the same thing. Both A and B are common refrains in different education circles, and C sounds a bit like bureaucratic word-smithing. So, like any good test taker, let’s make sure we understand the difference between the choices before picking our answer.

Statement A is perhaps the most common format for these types of descriptions. It is often used as a talking point of sorts, one that usually consists of two elements – a shockingly large number and some variation of “fail,” “failure,” or the inverse, “passing.” Before we pick A as the most “accurate” choice, however, we have to tap some of our background knowledge and consider what it means to pass or fail a test.

Generally speaking, when we describe a test score as passing or failing, it’s in relation to the consequences for the test taker. Fail your driver’s test? You can’t drive. Pass your boards? Welcome to the profession, Doctor. Both of these examples are clear pass/fail tests. In contrast, after taking the Armed Services Vocational Aptitude Battery, future armed service members don’t tell their parents they passed. Similarly, while I wasn’t crazy about my scores, I didn’t “fail” the GRE the first time I took it.

Testing in New York State provides an example of how these two frameworks co-exist in one system. In NYS, students in public education take assessments in grades 3-8, and then again following completion of high school courses. These commencement exams, known as Regents, cover mathematics, ELA, science, and social studies and have been graduation requirements for decades.

If a student does not achieve a particular score on the required Regents exams within four years, he or she will not graduate high school “on time.” For each Regents exam, and each student taking the exam, there is a bright line of cause and effect: Score below 65? The student fails the exam, will have to retake it, and, if unsuccessful, may need to retake the course (students can also challenge the grade, but do not get credit toward graduation if the challenge is unsuccessful).

Score at or above 65? Congratulations! The student can take the next course on that particular path, check off a box indicating she is on track to graduate, and has her summer to herself. While there are larger, long-term consequences to the school, the district, and the community based on how that individual student did on the Regents, there are explicit, well-known short-term consequences to the test taker and her family.

This bright line does not exist for the tests administered in grades 3-8, in mathematics and ELA, in compliance with No Child Left Behind mandates. There are no short-term negative consequences for students in grades 3-8 based on their performance on the state assessments. A child may be enrolled in Academic Intervention Services based on the district’s “response to intervention” structure. He or she may be considered for an honors-level course based on his or her performance, depending on the district’s criteria for course enrollment. However, no student in New York State can be held back as a result of performance on the state assessments, now that NYC has changed its policy to better match state-wide practices and NYSED policy.

So, while there is once again the possibility of larger, longer-term consequences to the school, the district, and the community based on how a group of students do on these tests, in the absence of a clear and bright line of a relationship between student performance on the test and consequences to the student, it’s misleading to say the student “failed” the test.

B is a little bit closer to what the chart is displaying but it’s still not quite there. “Grade level” can be classified as loaded language, making B suspect. While the framing of the New York State performance levels does reference grade level -- "Students performing at this level are proficient in standards for their grade" -- the reference to the standards for their grade level is key. NYS tests aren't supposed to correspond to grade level per se, except insofar as the cuts reflect minimum standards by grade. The difference is like saying that a basketball player is ready for the WNBA because her blood work shows her cholesterol levels are within normal levels for her age. In other words, the phrase “on grade level” is large, imprecise, and not what the state assessments intend to measure.

Ask a 4th grade teacher on Long Island what “grade level” writing looks like and then share that explanation with a 4th grade teacher in Watertown. Their answers may be very different – possibly because of the terms and jargon each teacher uses or the particular district-specific foci (Writer’s Workshop versus Six Traits). So, while B may be appealing – it seems like the right answer – it’s not precise enough.

As you may have guessed already, C, though boring and jargon-laden, is the correct answer here. There is no cause and effect implied in the statement. The language describing the measurement tool (the test) is precise. It’s clear to all readers what is being measured and how it is being measured. No rhetorical device, no argument, just an explanation of the data. That said, writers who use C aren’t endorsing a testing “regime,” spreading propaganda, or even supporting Education Reform. They are simply describing what the data show.

Does it matter?

Odds are the answer to the question “Does it matter?” will depend on where you sit within the system and your purpose when using particular words. Advocates for school reform or those who are unhappy with aspects of the system are, in general, likely to frame schools as doing poorly. If, on the other hand, you are a principal, sitting across from a parent who is trying to understand her child’s test scores, you’ll likely use the more unbiased language.

When it comes to communicating about testing, accuracy trumps intention, no matter how noble that intention might be. As an advocate for performance-based and curriculum-embedded assessments, I’d be happy to use whatever language it takes to get the conversation focused on more meaningful and accurate evidence of student learning. Yet, at what cost? Tests are part of the teaching profession. Don’t we have an obligation to use the language of our profession when discussing technical matters within the educational landscape? What is lost - or gained - by using more or less precise language? Does using imprecise language provide parents the clarity they need to understand the role of assessments? Is it really worth describing 68% of NYS students as “failures” or below “grade level” when those descriptions are not appropriate or even downright inaccurate?

Testing in grades 3-8 isn’t likely to go away until NCLB is repealed or reauthorized. Consider, therefore, what might happen if educators, and especially advocates writing about education, began to temper their language with accurate terms and specific terminology. Rather than talking about “failing kids,” we can start to talk about alternatives within the system.

One can almost excuse the media for using imprecise language. After all, who wouldn’t be compelled to read a story about how 65% of students “failed” a test? Yet, in the absence of this shift in language among educators, it’s likely we’re going to start another year re-affirming confirmation biases, filling up echo chambers with the same phrases and frustration, and causing yet another group of parents to wonder how it is that their 8-year-old can be labeled a failure by a system they’ve only barely entered.

- Jennifer Borgioli
