Data Driving: At The Intersection Of Arbitrary And Meaningful

In his State of the City address last month, New York City Mayor Michael Bloomberg made some brief comments about the upcoming adoption of new assessments aligned with the Common Core State Standards (CCSS), including the following statement:

> But no matter where the definition of proficiency is arbitrarily set on the new tests, I expect that our students’ progress will continue outpacing the rest of the State’s[,] the only meaningful measurement of progress we have.

On the surface, this may seem like just a little bit of healthy bravado. But there are a few things about this single sentence that struck me, and it also helps to illustrate an important point about the relationship between standards and testing results.

The first thing to note is the reference to the definition of proficiency – i.e., the choice of cut score above which students are deemed “proficient” – as “arbitrary.” On the one hand, Mayor Bloomberg is absolutely correct. The specification of cut scores, to put it mildly, is not an exact science.

On the other hand, dismissing these threshold choices as “arbitrary” is more than a little strange coming from a mayor who relies so heavily on proficiency rates to judge the performance of schools. Indeed, in the very same sentence, he calls the changes in these rates, relative to the state’s, “the only meaningful measurement of progress we have.”

If proficiency thresholds are “arbitrary,” how can they be the basis for the “only meaningful measurement” the city has?

The most plausible explanation for this seemingly contradictory statement is that Mayor Bloomberg thinks that changes in proficiency rates bear no relationship to where the proficiency line is set. In other words, he assumes that adopting the new standards will cause a one-time downward shift in proficiency rates in the first year, but will have no impact on rate changes going forward (or on the comparison of the city's changes with those of the state). I suspect that this belief is common.

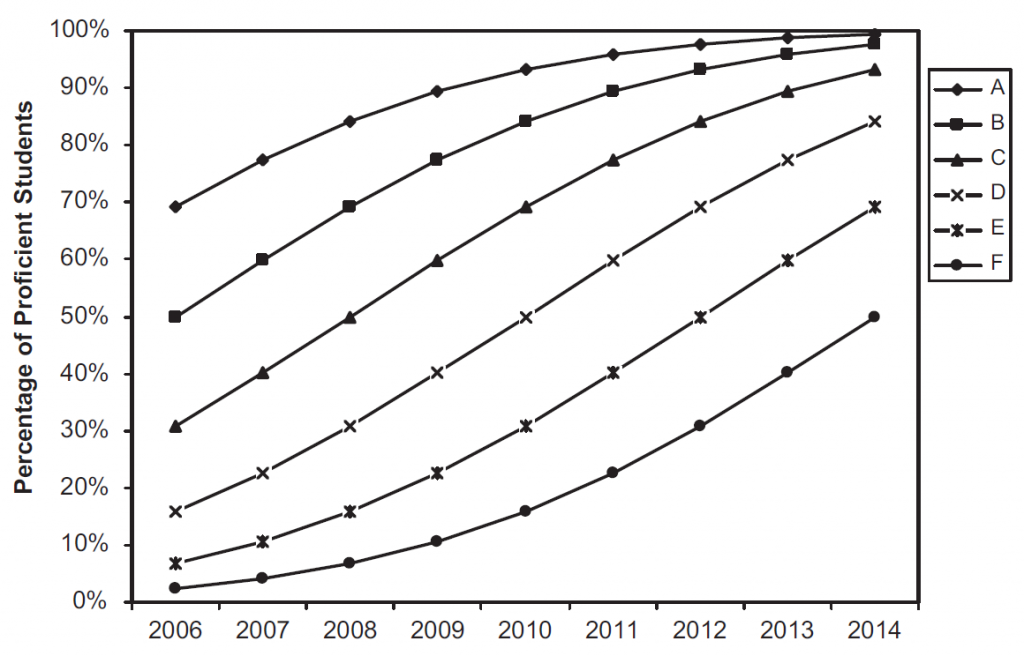

In reality, though, even if the tests themselves don't change in terms of content, where one sets the bar by itself can have a big impact on trajectories. To illustrate this, the graph below is taken from this terrific article by Andrew Dean Ho, which was published in the journal Educational Researcher.

Here we have six illustrative states in which the bar for proficiency is set at different levels. State A has the lowest cut score and thus the highest proficiency rates, while the opposite is true for state F.

The lines represent each state’s simulated trajectory between 2006 and 2014. Clearly, these states are on different paths. For instance, states A and B show huge increases in the first few years, and then level off. In contrast, the rates of states E and F are quite flat at first, and then increase sharply. As Ho notes, these results might lead to much speculation about which policy changes served to bring about such meaningful differences.

The kicker, in case you haven’t already guessed, is that these six “states” are the same state, using the exact same dataset of scores. The only difference between them is the proficiency cut score. The trends look so different primarily because the distribution of students’ scores around the proficiency line plays a substantial role in shaping how proficiency rates change. When many students are clustered just below the line, a modest improvement pushes a lot of them over it and the rate jumps; when few students are near the line, the same improvement barely moves the rate. If you change the line, you change the trajectory, whether for a school, district or entire state.

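Thus, the effect of adopting the CCSS on proficiency rates will not be a one-shot deal, whether in New York or elsewhere. It will persist for as long as the new cut scores are in use, shaping rate changes in every subsequent year, not just the first.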
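To make this mechanism concrete, here is a minimal Python sketch. It is not Ho's simulation: it assumes, purely for illustration, that scores are normally distributed and that the whole distribution rises by the same hypothetical amount (0.25 standard deviations) every year. The only thing that differs across the six "states" is the cut score. The function names and all parameter values are hypothetical.

```python
from math import erf, sqrt


def proficiency_rate(mean: float, sd: float, cut: float) -> float:
    """Share of a normal(mean, sd) score distribution at or above `cut`."""
    z = (cut - mean) / sd
    return 0.5 * (1.0 - erf(z / sqrt(2.0)))


def trajectory(cut: float, start_mean: float = 0.0, gain: float = 0.25,
               sd: float = 1.0, years: int = 9) -> list[float]:
    """Proficiency rates over `years` when the average score rises by the
    same hypothetical `gain` (in SD units) every year; only `cut` varies."""
    return [proficiency_rate(start_mean + gain * t, sd, cut) for t in range(years)]


if __name__ == "__main__":
    # Hypothetical cut scores, from lowest ("state A") to highest ("state F").
    for label, cut in zip("ABCDEF", [-0.5, 0.0, 0.5, 1.0, 1.5, 2.0]):
        rates = trajectory(cut)
        gains = [later - earlier for earlier, later in zip(rates, rates[1:])]
        print(f"{label}: cut={cut:+.1f}  "
              f"first-year gain={gains[0]:.3f}  last-year gain={gains[-1]:.3f}")
```

Under these assumptions, the low-cut "states" post large gains early that then shrink as nearly everyone clears the bar, while the high-cut "states" barely move at first and then accelerate as the bulk of the distribution approaches the line, even though the underlying scores improve by exactly the same amount every year.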

Now, back to the mayor's statement: If you fail to acknowledge that the choice of thresholds affects trajectories, or that rates and average scores often move in different directions, or that year-to-year cohort changes represent comparisons between two different groups of students, you might very well think that rate changes are the "only meaningful measurement of progress we have," when in reality they are not "progress" measures at all, and aren't particularly meaningful when assessing district or school performance.
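The point that rates and average scores can move in opposite directions is easy to check with a toy example. The scores and the cut score of 300 below are invented for illustration, not real test data; and, as with year-to-year cohort comparisons, the two lists represent different groups of students.

```python
from statistics import mean

CUT = 300  # hypothetical proficiency cut score


def summarize(scores: list[int], cut: int = CUT) -> tuple[float, float]:
    """Return (average score, share of students at or above the cut)."""
    rate = sum(score >= cut for score in scores) / len(scores)
    return mean(scores), rate


# Two hypothetical cohorts: different students tested in different years.
year1 = [280, 298, 299, 350, 360]
year2 = [250, 301, 302, 303, 310]

avg1, rate1 = summarize(year1)
avg2, rate2 = summarize(year2)
print(f"Year 1: average {avg1:.1f}, proficiency rate {rate1:.0%}")  # Year 1: average 317.4, proficiency rate 40%
print(f"Year 2: average {avg2:.1f}, proficiency rate {rate2:.0%}")  # Year 2: average 293.2, proficiency rate 80%
```

Here the proficiency rate doubles while the average score falls, because most of the second cohort sits just above the line, while most of the first sits just below it, with two high scorers pulling its average up.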

In fairness, I'm nitpicking this one sentence. Mayor Bloomberg has a day job, to say the least, and strong familiarity with the fine-grained details of educational measurement is not among the requirements for that position. That said, these kinds of misconceptions, though common, are still a little disconcerting coming from individuals who are in charge of school systems, especially when, like Mayor Bloomberg, they are strong advocates for the high-stakes use of testing data.

As I’ve said before, I would have a lot more faith in “data-driven decision making” if the people making the decisions had a little more time behind the wheel.

NOTE TO READERS:

This post's title was originally "Data Driving Under The Influence." That title didn't convey what I intended, and, more importantly, it was tasteless (this is why I usually stick with boring titles).

I apologize, and have changed the title.

MD

When Michael Bloomberg first ran for mayor, he told voters that he wanted to be judged on the progress of the schools.

At his urging, New York State essentially replaced New York City's elected school board(s) with mayoral control.

Yes, Bloomberg has a day job, and he told his constituents that his performance in this job should be judged based upon the progress the schools made.

This is not some minor or tangential issue. How we recognize or judge the progress of the schools is absolutely central to how this mayor said we should evaluate him. I therefore have little doubt that he understands all this. And if he does not, that reveals a degree of ignorance and thoughtlessness about a central issue that is, in my view, far worse than spouting a bunch of blather about what will happen with the new CCSS tests. As he is now well past the term limits that were in place when he was first elected, there would be no excuse for such ignorance.

Hi Matt,

This is my first time reading your blog, so forgive me if this is a bit more general of a response to your comments, or if you've addressed this previously.

I think it's important to understand that "data-driven decision-making" in education doesn't really have its roots in the accountability movement, and really can't be fairly summarized by its use in that department. Data-driven instruction/decision-making is really more of a concept designed to allow educators - from classroom to district or even state levels - to make better decisions related to instruction and education overall.

This may seem like an obvious statement, but the reason I'm taking the time to write this comment is that I think certain ideas are being vastly misrepresented by anti-reformers, and I think it's important to continue to defend things like "data-driven decision-making" so that educators don't feel as though it's a concept rooted in corporate reform, designed to evaluate teachers based on year-end tests. What I've noticed is that certain concepts, including the idea of "reform" itself, have left many with a bad taste in their mouths because they've seen some pretty bad examples recently. The truth is that there are many struggling students and many struggling schools, and that we DO need to be focused on continuous improvement. Most educators have historically used data-driven decision-making, at least in ways that make a lot of sense, and I think it's important that we don't throw out concepts such as "data-driven decision-making" because a few people we don't like have used them in a few ways we don't like.

I know that in the course of day-to-day blogging it may be cumbersome to remind folks of those bigger-picture issues, but I think it's important, at least some of the time, to frame things in the appropriate context. Maybe you do this and I've just stumbled on a non-example, but including phrases like "we do value data-driven decision-making, but here's an example of how it's being misused" would be immensely valuable, so that educators who have joined the anti-reform bandwagon aren't misled into thinking that any and all new ideas are inherently bad unless they were developed by and for teachers.

Thanks, Matt.